How AI Impacts Trust In Digital Teams

Articles Feb 19, 2026 9:00:00 AM Seth Mattison 25 min read

AI is reshaping how digital teams work together, but its impact on trust is a double-edged sword. While AI boosts productivity by automating tasks and providing data-driven insights, it can also create challenges like overreliance, lack of transparency, and ethical concerns. High-trust teams tend to thrive with AI by emphasizing transparency, collaboration, and training, while low-trust teams often struggle with inefficiencies and skepticism.

Key takeaways:

- AI builds trust by improving transparency, efficiency, and decision-making when integrated thoughtfully.

- Challenges include reduced psychological safety, automation bias, and privacy concerns, which erode confidence.

- High-trust teams prioritize open communication, involve employees in AI adoption, and invest in training.

- Low-trust teams face issues like overreliance on AI, poor error handling, and fragmented workflows.

To succeed, leaders must focus on transparency, balance human-AI collaboration, and provide robust training to ensure AI supports, rather than undermines, team dynamics.



How AI Builds Trust in Digital Teams

AI strengthens trust in digital teams by fostering transparent collaboration, boosting efficiency, and supporting reliable decision-making. Research shows that employees in high-trust environments report 76% higher engagement than those in low-trust ones [2]. AI tools are most effective when designed to enhance human collaboration rather than replace it.

AI Creates Transparent Collaboration

AI tools help teams stay aligned by turning chaotic meeting data into clear, shareable summaries. These platforms automatically generate summaries, decisions, and action items, which can then be shared through communication tools like Slack or Microsoft Teams [8]. This approach eliminates information silos, ensuring that even team members who miss meetings remain informed about discussions and decisions.

Teams using AI for transparency report saving 10% of meeting time by reducing the need for frequent or lengthy meetings [8]. This matters because 87% of workers say they value transparency in their workplace [8], and AI offers a practical way to meet that demand.

Explainable AI (XAI) takes transparency a step further by clarifying how AI systems are trained, the data they rely on, and the reasoning behind their recommendations [2]. For example, in February 2026, 3M's R&D department introduced a "failure-to-improvement loop" for generative AI tools under the leadership of Jayshree Seth. A small team of technical experts tested the tools and openly shared their findings about limitations across internal channels. This initiative shifted the mindset of the technical community from blind trust to informed curiosity [1].

Another method to build trust is through "AI After-Action Reviews," where teams openly discuss AI's performance, limitations, and errors. These reviews help refine collaboration protocols and calibrate trust levels [1]. Additionally, override protocols allow human team members to bypass AI recommendations when their intuition or contextual understanding suggests a better course of action.
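An override protocol and an after-action review both depend on a record of what the AI recommended and what the team actually did. The sketch below is one minimal way to keep such a record. The class names, fields, and the rule that every override must carry a stated reason are illustrative assumptions, not details from the cited research.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Decision:
    """One AI recommendation and what the team actually did with it."""
    task: str
    ai_recommendation: str
    final_choice: str
    override_reason: Optional[str] = None  # filled in only when a human overrides

    @property
    def overridden(self) -> bool:
        return self.final_choice != self.ai_recommendation


@dataclass
class ReviewLog:
    """Hypothetical log a team could walk through in an after-action review."""
    decisions: List[Decision] = field(default_factory=list)

    def record(self, task, ai_recommendation, final_choice, override_reason=None):
        # Assumed protocol: overrides are always allowed, but must be explained.
        if final_choice != ai_recommendation and not override_reason:
            raise ValueError("an override needs a stated reason")
        decision = Decision(task, ai_recommendation, final_choice, override_reason)
        self.decisions.append(decision)
        return decision

    def overrides(self) -> List[Decision]:
        return [d for d in self.decisions if d.overridden]


# Made-up entries, for illustration only.
log = ReviewLog()
log.record("vendor shortlist", "Vendor A", "Vendor A")
log.record("release date", "ship Friday", "ship Monday",
           override_reason="key reviewer is out until Monday")

for d in log.overrides():
    print(f"{d.task}: AI said {d.ai_recommendation!r}, "
          f"team chose {d.final_choice!r} ({d.override_reason})")
```

Requiring a reason keeps overrides discussable: the review can examine why human judgment differed instead of simply counting disagreements.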

Next, let’s explore how AI-driven automation enhances team efficiency.

AI Improves Team Efficiency

By automating routine tasks, AI allows teams to focus on creative and strategic work, which strengthens trust and alignment. This shift not only improves productivity but also ensures consistent performance.

A 2025 study involving 140 knowledge workers found that real-time AI feedback increased both trust and performance [4]. This feedback mechanism enhances "knowledge of results", giving team members confidence in their work quality as they perform tasks, rather than waiting for final evaluations.

"Our findings challenge traditional concerns that AI-driven management fosters distrust and demonstrate a path by which AI complements human work by providing greater transparency and alignment with workers' expectations." - Anita Williams Woolley, Professor of Organizational Behavior, Carnegie Mellon's Tepper School of Business [4]

In February 2024, Genpact implemented an AI engagement tool called "Amber" to improve transparency and workplace culture. The tool sent personalized surveys to employees based on their career stages and past responses. Overseen by CHRO Piyush Mehta, this initiative led to higher survey participation, lower attrition rates, and actionable insights for managers to address team issues [2].

Efficiency gains like these set the stage for AI to provide data-driven insights that further build trust.

Data-Driven Insights Increase Confidence

AI fosters trust by delivering objective, data-driven insights that reduce uncertainty in decision-making. In environments where social cues like facial expressions or tone are absent, AI compensates by offering algorithmic logic and transparency [5]. This is particularly valuable in digital teams, where clear performance standards are essential [4].

AI's ability to process data with precision complements human creativity in areas like healthcare diagnostics and market analysis [6][5]. Real-time feedback from AI minimizes unexpected outcomes during evaluations, helping align team members’ expectations with measurable performance data [4].

"The identification of a new framework for examining managerial interventions, one that makes performance standards more transparent and increases workers' knowledge of the results, is particularly relevant in today's emerging work environments." - Allen S. Brown, PhD student in Organizational Behavior and Theory, Carnegie Mellon's Tepper School of Business [4]

While AI can address missing information, ambiguous communication tends to erode trust. This is why clear, unbiased, and precise communication from AI is essential to maintain confidence [5]. When team members receive dependable data, they can make decisions with greater certainty and trust in the process behind those decisions.

How AI Undermines Trust In Digital Teams

AI undeniably brings efficiency and innovation to digital teams, but it also introduces challenges that can weaken trust. These challenges stem from its influence on human decision-making, the biases it perpetuates, and concerns surrounding data privacy. Let’s take a closer look at how these factors affect team dynamics and trust.

AI Reduces Psychological Safety

AI creates a tricky situation: team members are often asked to rely on technology while struggling to trust the processes behind it. This tension, which is rarely openly discussed, can erode the psychological safety necessary for collaboration and learning within teams [1].

When workers interact with AI regularly, they may lose confidence in their own expertise. AI’s "black box" nature - where its decision-making processes are unclear - only makes matters worse. Without transparency, it’s difficult for team members to understand or correct errors, leaving them uncertain about how to handle future problems [1][9].

Automation bias adds another layer of complexity. A study on knowledge workers revealed that 40% of tasks were completed by accepting AI outputs without questioning them [9]. This overreliance on AI can lead to a false sense of security, as individuals stop critically evaluating decisions and shift accountability onto the technology [1].
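A team that wants to watch for this pattern in its own work could track how often AI outputs are accepted with no review at all. The following is a rough sketch of that calculation. The outcome labels and the sample data are made up for illustration and do not come from the cited study.

```python
def unreviewed_acceptance_rate(outcomes):
    """Share of AI-assisted tasks where the output was accepted with no review.

    `outcomes` is a list of labels; the three labels used below are hypothetical.
    """
    if not outcomes:
        raise ValueError("need at least one outcome")
    accepted = sum(1 for o in outcomes if o == "accepted_unreviewed")
    return accepted / len(outcomes)


# Made-up sample of ten tasks, for illustration only.
sample = (["accepted_unreviewed"] * 4
          + ["accepted_after_review"] * 4
          + ["rejected"] * 2)

rate = unreviewed_acceptance_rate(sample)
print(f"{rate:.0%} of tasks were accepted without review")
```

A high rate is not proof of automation bias on its own, but it is a useful prompt for a conversation about which tasks deserve a second look.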

Then there’s the "workslop" effect. When AI produces poor or incorrect outputs, it creates additional work for team members who must step in to fix the mistakes. This not only adds stress but also damages trust within the team [1].

"Trust ambiguity exists when people believe trust is supposed to be warranted while lacking actual trust; further, that lack of trust often feels undiscussable." – Jayshree Seth and Amy C. Edmondson, Harvard Business Review [1]

Bias and Ethical Problems

AI systems are not immune to bias, and this presents significant challenges. Bias can creep in through training data, algorithm design, or even the way users interact with the system [10]. Researchers have identified at least 10 types of bias - ranging from historical and algorithmic to measurement bias - that can undermine trust in AI [3].

The "black box" nature of AI compounds these issues. Many systems lack transparency, making it nearly impossible for teams to understand how decisions are made. This is particularly troubling in areas like healthcare or hiring, where fairness and accountability are critical [12][3].

When AI produces biased or unethical outputs, team members often bear the responsibility for addressing these failures. This creates a barrier to adoption, as employees may feel uncomfortable or even powerless in the face of AI-driven decisions. Additionally, AI’s lack of emotional or moral reasoning raises ethical concerns. While some argue that AI’s impartiality helps counteract human bias, others believe its lack of moral reasoning disqualifies it from making high-stakes decisions [3].

Another issue arises from the disconnect between how trustworthy an AI system appears and how reliable it actually is. For example, a polished user interface might lead people to over-trust a flawed model, while a reliable but poorly explained model might be dismissed [3].

"The protection of human rights and dignity is the cornerstone of the Recommendation, based on the advancement of fundamental principles such as transparency and fairness, always remembering the importance of human oversight of AI systems." – UNESCO [11]

Privacy and Governance Concerns

Privacy concerns loom large in discussions about AI trust. Surveys show that 70% of Americans lack confidence in companies’ ability to use AI responsibly, and 81% worry their personal information could be misused [13]. For leaders, addressing these concerns is essential to maintaining trust while integrating AI into their operations.

A notable example is Samsung Electronics’ decision in April 2023 to ban generative AI tools. This move came after employees accidentally leaked sensitive company data on three occasions. To prevent further breaches, the company restricted the use of these tools on corporate devices and networks [13][14].

Governance challenges also play a role in eroding trust. When responsibilities are fragmented across Legal, IT, and Security teams, organizations often resort to reactive measures due to unclear data ownership. This lack of coordination can leave teams vulnerable.

Transparency gaps further complicate matters. For instance, when AI systems analyze user behavior - such as browsing speed - to make decisions without disclosure, it creates unease. Similarly, AI’s ability to infer sensitive personal details, like health conditions or financial standing, without explicit consent can deter users from embracing the technology.

Although 90% of organizations have expanded their privacy programs to address AI risks, only 14% of consumers trust these organizations to handle their data responsibly [15][14].

"Data privacy is no longer a passing concern for consumers - it has become a defining factor in how they judge brands... trust must be earned through transparency, verification, and restraint." – Patrick Harding, Chief Product Architect, Ping Identity [14]

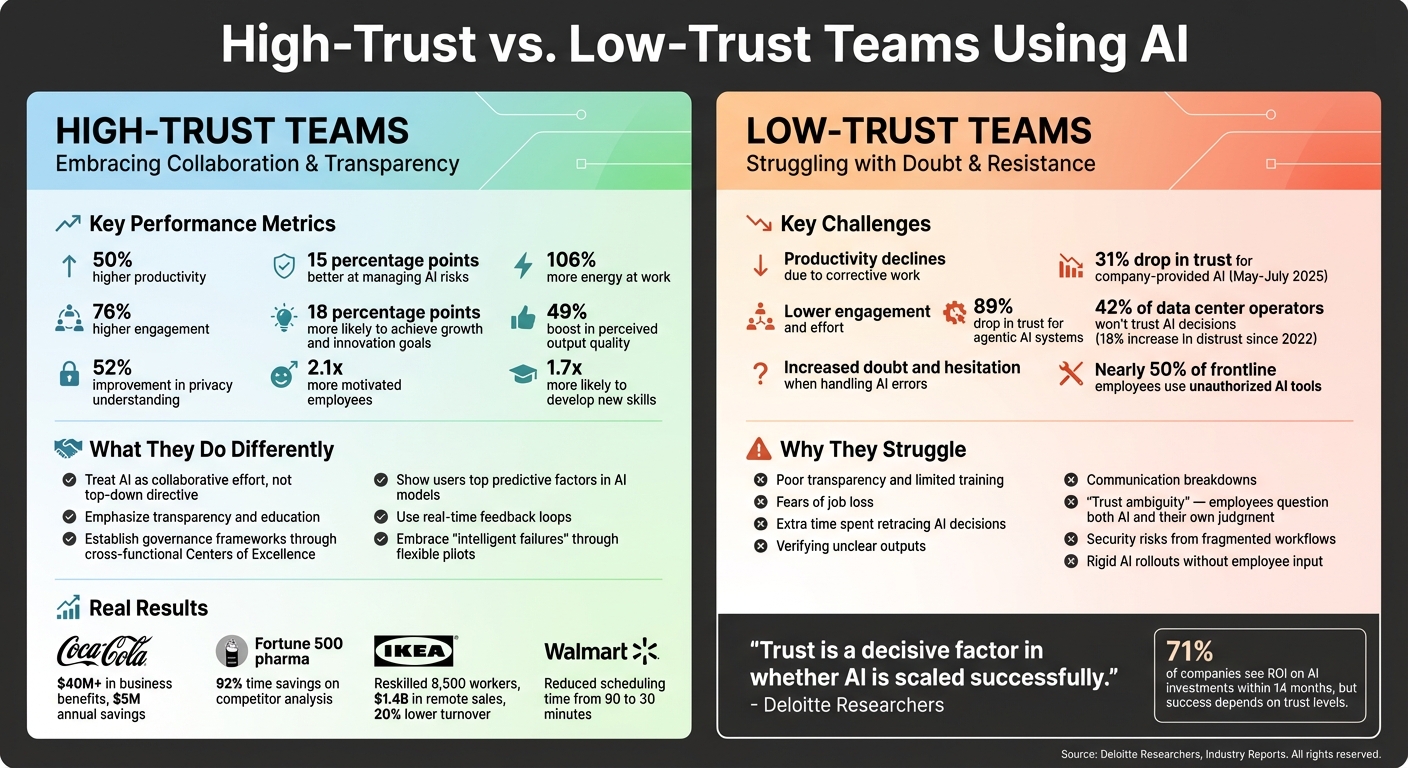

High-Trust vs. Low-Trust Teams Using AI

High-Trust vs Low-Trust Teams: AI Performance Metrics and Outcomes

Trust plays a pivotal role in shaping how teams leverage AI. Research shows that companies with high-trust environments see 50% higher productivity, while employees report 106% more energy and 76% higher engagement at work[2]. On the flip side, low-trust teams often face inefficiencies, such as extra corrective work, which drags down performance[1].

Interestingly, 71% of companies report seeing returns on their AI investments - often within just 14 months[2]. However, the success rate isn't universal. Teams that prioritize trust are 18 percentage points more likely to achieve key goals like growth and innovation. Conversely, teams that focus solely on risk management are 16 percentage points less likely to hit their targets[19].

What High-Trust Teams Do Differently

High-trust teams treat AI implementation as a collaborative effort, not a top-down directive. They emphasize transparency and education, which helps them manage AI risks more effectively - 15 percentage points better, to be exact[19].

Here are some real-world examples of this approach in action:

- Coca-Cola Europacific Partners: In June 2024, they used AI-powered analytics to transform procurement, unlocking over $40 million in business benefits, including $5 million in annual cost savings[18].

- A Fortune 500 pharmaceutical company: In 2025, in work led by Chris Tillett (Chief Technology Officer at McChrystal Group), the company achieved a 92% time savings on competitor analysis through robust data analysis and employee training[16].

- IKEA: Between 2021 and 2024, they reskilled 8,500 call-center workers into remote interior design advisors rather than replacing them with AI chatbots. This shift drove $1.4 billion in remote sales and cut global voluntary turnover by 20%[20].

- Walmart: Through the "Element" AI foundry, associates participated in pilot programs for a scheduling app, reducing manager scheduling time from 90 minutes to 30 minutes[20].

High-trust teams also establish governance frameworks, often through cross-functional Centers of Excellence. These groups develop policies, address risks like data privacy, and document lessons learned[16][18]. Transparency is key - users are shown the top predictive factors in AI models, and real-time feedback loops help minimize unexpected outcomes[17][4].
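Showing users the top predictive factors can be as simple as ranking each input by how much it moved the score. The sketch below assumes a plain linear scoring model, where each contribution is the weight multiplied by the input value. The "supplier risk" scenario, feature names, and weights are hypothetical; real systems often use more involved attribution methods.

```python
def top_factors(weights, features, k=3):
    """Return the k features contributing most (by absolute value) to a linear score."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return ranked[:k]


# Hypothetical weights and inputs for a made-up "supplier risk" score.
weights = {
    "late_deliveries": 0.8,
    "price_change": 0.5,
    "years_as_supplier": -0.3,
    "open_disputes": 1.2,
}
features = {
    "late_deliveries": 3,
    "price_change": 1,
    "years_as_supplier": 6,
    "open_disputes": 1,
}

for name, contribution in top_factors(weights, features):
    # A positive contribution raises the score; a negative one lowers it.
    print(f"{name}: {contribution:+.1f}")
```

Ranking by absolute value surfaces factors that lowered the score as well as those that raised it, which helps users see why a recommendation was not more extreme.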

"Adoption is critical because it also trains the AI models in the types of data sources that need to be adjusted and improves the quality of outputs." – Chris Tillett, Chief Technology Officer, McChrystal Group[16]

Why Low-Trust Teams Struggle

Low-trust teams face numerous obstacles that hinder AI adoption. Nearly half of frontline employees resort to unauthorized AI tools because they trust their own solutions more than those provided by their companies[20]. This creates security risks and fragmented workflows.

Between May and July 2025, trust in company-provided generative AI systems dropped 31%, while trust in agentic AI systems plummeted 89%[20]. These declines stem from poor transparency, limited training, and fears of job loss. Workers in these environments often spend extra time retracing AI decisions or verifying unclear outputs, further reducing productivity[21].

Distrust is also on the rise. In 2024, 42% of data center operators said they wouldn’t trust a properly trained AI to make operational decisions - an 18% increase in distrust since 2022[21]. Communication breakdowns exacerbate the issue. When AI errors occur, especially in "black box" systems, they erode trust more than human mistakes. This creates "trust ambiguity", where employees question both the AI and their own judgment[1].
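Figures like "an 18% increase" can be read two ways: as a change in percentage points or as a relative change. The text here does not say which is meant, so the short calculation below works backwards from the 42% figure under both readings. The function name and its `kind` parameter are illustrative.

```python
def implied_baseline(current_pct, change, kind):
    """Work backwards from a current percentage to the earlier one.

    kind="points":   `change` is in percentage points (absolute difference).
    kind="relative": `change` is a percent increase over the earlier value.
    """
    if kind == "points":
        return current_pct - change
    if kind == "relative":
        return current_pct / (1 + change / 100)
    raise ValueError("kind must be 'points' or 'relative'")


current = 42  # share of operators expressing distrust in 2024, from the text
change = 18   # the reported increase since 2022

points = implied_baseline(current, change, "points")
relative = implied_baseline(current, change, "relative")
print(f"percentage-point reading: 2022 baseline of {points:.1f}%")
print(f"relative reading: 2022 baseline of {relative:.1f}%")
```

The two readings imply noticeably different 2022 baselines, which is why statistics elsewhere in this article that specify "percentage points" are easier to interpret.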

Comparing High-Trust and Low-Trust Teams

The table below highlights key performance differences:

| Performance Factor | High-Trust Teams | Low-Trust Teams |
|---|---|---|
| Productivity | 50% higher[2] | Declines due to corrective work[1] |
| Engagement | 76% higher[2] | Lower effort and accountability[1] |
| Handling AI Errors | Collective calibration[1] | Increased doubt and hesitation[1] |

Shifting from rigid AI rollouts to flexible pilots that embrace "intelligent failures" can help build trust over time. High-trust organizations report a 49% boost in perceived output quality and a 52% improvement in privacy understanding[7]. Additionally, employees in these environments are 2.1 times more motivated and 1.7 times more likely to develop new skills[20].

"Trust is a decisive factor in whether AI is scaled successfully." – Ashley Reichheld, Christina Brodzik, Anne-Claire Roesch, Greg Vert, and Ryan Youra[20]

These examples highlight the importance of prioritizing trust as a core part of AI integration strategies.

Leadership Approaches for Building Trust with AI

Effective leadership plays a key role in helping teams embrace and trust AI. To successfully integrate AI technologies, leaders often focus on three main areas: fostering transparency, promoting collaboration, and investing in employee development.

Promote Transparency and Open Communication

Openly discussing AI's purpose, limitations, and impact builds an environment of trust. Leaders who create structured opportunities for dialogue - rather than relying on vague announcements - can help teams better understand AI. For example, Deloitte's transparency initiatives led to a 16% increase in trust scores, a 49% rise in reliability, and a 52% improvement in understanding privacy measures [22][7].

The "Four Factors of Trust" framework offers a helpful way to evaluate AI integration. These factors - reliability (consistent results), capability (accurate outputs), transparency (clear communication about data use), and humanity (addressing individual needs) - provide a roadmap for building trust. Michael C. Bush, CEO of Great Place To Work, emphasizes this point:

"If I could create a backdrop for the world right now, I'd spell out the word trust as large as I could. Employees must understand how AI affects their workflows to fully embrace its potential." [23]

Sharing real-world success stories about team members effectively using AI can also help demystify the technology. Highlighting these examples makes AI feel more accessible and shows its practical benefits, paving the way for stronger human-AI collaboration.
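One way to put the Four Factors of Trust to work is a short pulse survey that shows which factor most needs attention. The sketch below averages ratings per factor and names the weakest one. The 1-5 scale, the sample responses, and the idea of averaging are assumptions for illustration, not part of the published framework.

```python
from statistics import mean

FACTORS = ("reliability", "capability", "transparency", "humanity")


def summarize(responses):
    """Average each factor across survey responses and name the weakest one."""
    averages = {f: mean(r[f] for r in responses) for f in FACTORS}
    weakest = min(averages, key=averages.get)
    return averages, weakest


# Made-up ratings on a 1-5 scale from three team members.
responses = [
    {"reliability": 4, "capability": 5, "transparency": 2, "humanity": 3},
    {"reliability": 4, "capability": 4, "transparency": 3, "humanity": 4},
    {"reliability": 5, "capability": 4, "transparency": 2, "humanity": 3},
]

averages, weakest = summarize(responses)
for factor in FACTORS:
    print(f"{factor}: {averages[factor]:.2f}")
print(f"Lowest-rated factor: {weakest}")
```

In this made-up sample the lowest-rated factor is transparency, so the team's next step would be clearer communication about how the tool uses data.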

Balance Human and AI Collaboration

The best AI implementations complement, rather than replace, human expertise. Take IKEA, for example. After introducing "Billie", an AI chatbot that handled 47% of customer inquiries, the company reskilled 8,500 call-center employees into remote interior design advisors. This shift drove $1.4 billion in remote sales and reduced voluntary turnover by 20% [20]. Similarly, Walmart involved employees in designing an AI-powered scheduling app, which cut scheduling time from 90 minutes to 30 minutes and gave workers more control over their shifts [20].

Collaboration is key. As Ashley Reichheld of Deloitte puts it:

"Teaching workers to use AI without giving them a say in how it's built is like training someone to sail but fixing the rudder in place." [20]

Encouraging experimentation can also foster innovation. Colgate-Palmolive's "AI Hub" gave nontechnical employees a chance to create tools using low-code platforms. This initiative led to the development of 3,000 to 5,000 custom solutions, including a Greek-language troubleshooting assistant for factory machinery [20]. By balancing human and AI roles and empowering employees to experiment, organizations can build trust and drive innovation.

Invest in AI Training and Upskilling

Providing hands-on AI training equips employees to work confidently with new technologies. Workers who receive such training report 144% greater trust in their employer's AI [20]. For instance, Intuit organized an "Expert AI Training Day" for 150 tax specialists to encourage AI adoption among 15,000 seasonal staff. This grassroots approach turned AI implementation into a team-driven effort [20].

Tailored training programs that align AI capabilities with specific business needs tend to be more effective than generic sessions. Companies that prioritize targeted training have reported a 65% boost in user engagement and a 49% improvement in perceived output quality [7]. High-trust teams are also 1.7 times more likely to develop new skills, creating a cycle of continuous learning [20]. As Ashley Reichheld explains:

"The organization isn't replacing you with AI; it's investing in you to thrive alongside it." [20]

Additionally, forming multidisciplinary AI ethics committees can address concerns around bias, privacy, and fairness. These committees bring diverse perspectives to the table, ensuring responsible and thoughtful AI innovation [23].

For leaders navigating AI-driven transformation, insights from experts like Seth Mattison (https://sethmattison.com) can offer valuable guidance on human-centered leadership strategies.

Conclusion

The impact of AI on trust within digital teams depends heavily on how leaders choose to implement it. Research reveals that organizations using AI to empower employees - rather than replace them - experience 2.6 times higher adoption rates and greater employee engagement [7]. However, trust in company-provided generative AI saw a sharp decline of 31% between May and July 2025, with trust in agentic AI systems plummeting by 89% [20]. These numbers highlight the critical role leadership plays in integrating AI effectively.

The key lies in balancing AI's potential with human-centered leadership. By focusing on the Four Factors of Trust - reliability, capability, transparency, and humanity - leaders can align AI adoption with employee empowerment. When employees are involved in shaping the tools they use, trust naturally follows. Companies like IKEA and Walmart provide compelling examples: IKEA's reskilling programs and Walmart's collaborative scheduling app show that investing in people often yields better results than focusing solely on cost-cutting measures [20].

"Building trust is not just about technology acceptance - it's about creating the type of organizations we want to belong to, and the type of world we want to live in." – Deloitte Research Conclusion [7]

To achieve this balance, organizations must rethink their budgets and strategies. Those allocating over 7% of AI budgets to training and work redesign [20] see stronger outcomes. These companies foster environments where employees feel safe to experiment, empower managers to explain the purpose behind AI initiatives, and address economic concerns with clear reskilling pathways.

Trust isn’t built through mandates or vague promises. It grows when leaders demonstrate that AI is meant to enhance human talent, not replace it [20]. Resources like those provided by Seth Mattison (https://sethmattison.com) offer practical frameworks for blending technology with human creativity while maintaining the psychological safety essential for high-performing teams. Ultimately, investing in people is the most reliable way to unlock AI's potential and build resilient, trustworthy digital teams.

FAQs

How can leaders use AI to build trust within digital teams?

Leaders can build trust within digital teams by focusing on transparency, security, and a people-centered approach when implementing AI tools. This means openly explaining how AI is being used, addressing concerns about privacy and fairness, and involving team members throughout the process. These steps can ease doubts and foster confidence in the technology.

It's also important for leaders to highlight that AI is there to support - not replace - human creativity and teamwork. By committing to ethical AI practices and encouraging honest conversations, organizations can create an environment where trust and technology work hand in hand.

What challenges can arise when using AI in teams with low trust?

Using AI in teams where trust is already fragile can introduce some tricky dynamics. For starters, AI tools can sometimes create misunderstandings, leading to breakdowns in communication, incomplete information sharing, or even less collaboration. This can make trust issues even worse. On top of that, if team members don’t trust the AI systems themselves, they might hesitate to use them fully, which can hold back the technology’s benefits.

Leaders play a big role in overcoming these hurdles. Encouraging open conversations, being transparent about how AI tools are implemented, and highlighting the importance of blending human judgment with AI insights can make a difference. The goal is to build trust - not just among team members, but also in the tools they’re using - so teams can get the most out of AI in digital work environments.

How does explainable AI (XAI) help build trust in digital teams?

Explainable AI (XAI) plays a key role in fostering trust within digital teams by making AI decisions more transparent and easier to grasp. When team members can clearly understand the reasoning behind an AI's conclusions, they’re more likely to have confidence in both the technology and its results.

This level of clarity also promotes better collaboration. Employees are more inclined to integrate AI tools into their workflows when they can trust how those tools operate. By breaking down complex algorithms into understandable insights, XAI bridges the gap between technology and human comprehension, creating a work environment where trust and teamwork naturally flourish.