Judgment in Knowledge Sharing: Human vs. AI Roles

Seth Mattison · May 2, 2026 · 14 min read

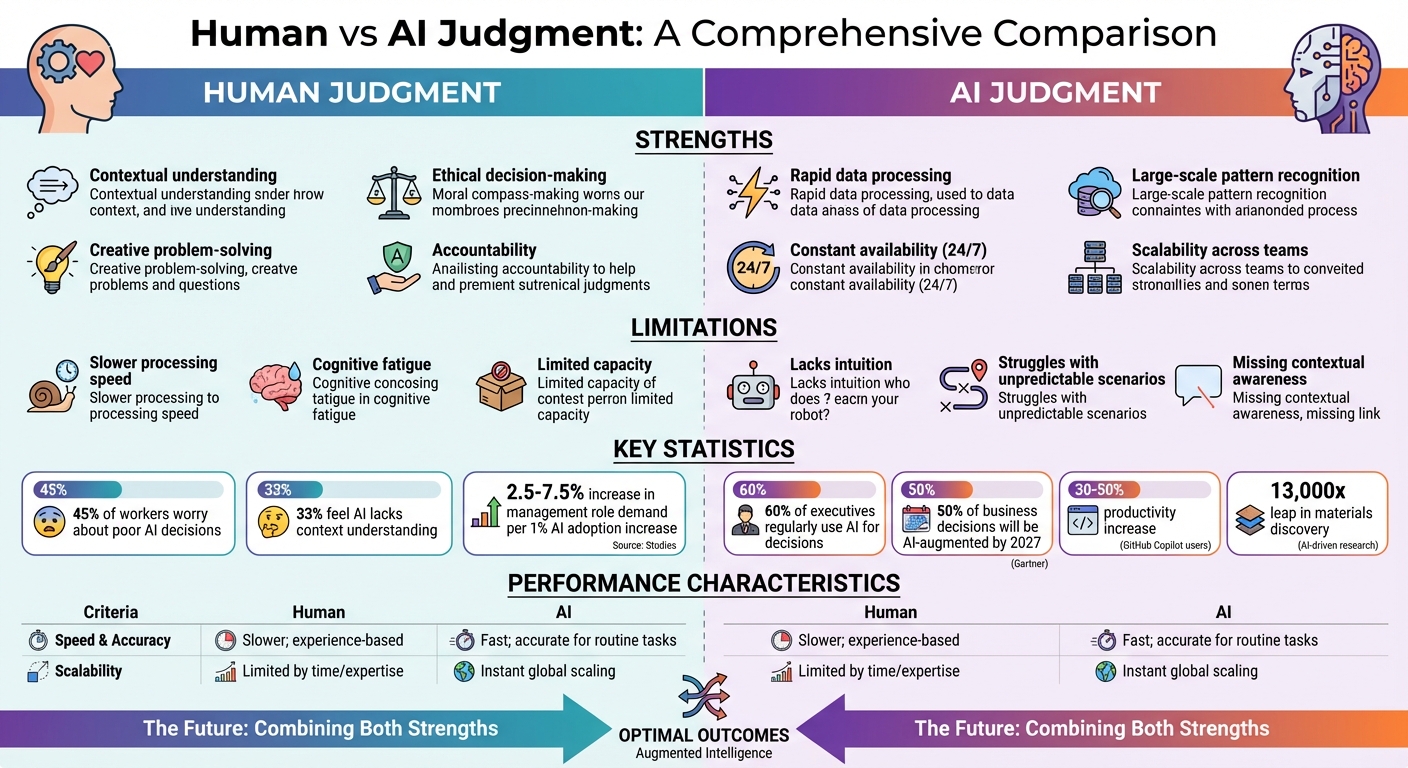

AI and humans each bring unique strengths to decision-making and knowledge sharing. AI processes massive datasets quickly, identifies patterns, and supports large-scale operations. Humans, on the other hand, excel at providing context, ethical reasoning, and accountability. The challenge lies in combining these strengths effectively.

Key takeaways:

- AI's strengths: Speed, scalability, and pattern recognition. However, it lacks context, intuition, and accountability.

- Human strengths: Contextual understanding, ethical oversight, and creative problem-solving. Slower but more nuanced in high-stakes decisions.

- Balance is critical: Companies integrating AI with human judgment see increased demand for roles emphasizing decision-making and interpersonal skills.

AI is a tool, but human oversight ensures its outputs are useful and safe. The future belongs to organizations that leverage both effectively, preserving human skills like critical thinking while utilizing AI for efficiency.

1. Human Judgment

Human judgment is shaped by a wealth of real-world experience. When professionals share their insights, they’re not just passing along facts - they’re offering context enriched by relationships and lived experiences [7]. This depth allows them to provide practical examples that highlight the real impact of human judgment.

One of the strengths of human judgment is its ability to catch what AI often overlooks - those unasked questions, hidden assumptions, and blind spots that lie beyond the scope of an algorithm’s training [2][3]. Take, for instance, the case of Syntiant in March 2026. A group of 2,000 experts used CrowdSmart's "Generative Collective Intelligence" framework to evaluate the startup, awarding it a 91% score. But beyond the numbers, human reasoning uncovered something crucial: Syntiant's ultra-low-power AI chips had potential far beyond their original niche. This insight led to a shift in the company’s business model, securing a Series A investment from Intel within 100 days and pushing its valuation close to $1 billion [6].

Human judgment doesn’t just identify opportunities - it also acts as a safeguard. Think of it as a quality control system that stops flawed AI outputs from being accepted without question. This role is critical, especially when 33% of professionals worry that AI doesn’t fully grasp the complexity or context of their work [4]. Humans bring ethical, social, and strategic perspectives into the mix, asking "why" and testing cause-and-effect relationships, whereas AI largely relies on statistical patterns [5].

Relying too heavily on AI carries its own risks: cognitive atrophy, where critical thinking skills weaken, and intellectual convergence, where thinking narrows toward the same answers and innovation suffers. To counteract these risks, companies like EY are investing heavily in human-centered AI strategies - spending $1.4 billion on upskilling programs for their 400,000 employees to ensure that human judgment remains central to their approach [2].

While humans can’t compete with AI’s speed, they provide essential context and ethical oversight. Human judgment adds value in ways AI cannot: shouldering the responsibility for decisions, connecting unrelated ideas (like using butterfly wing structures to improve drug delivery), and making tough calls when the stakes are high and outcomes are uncertain [2]. As Seth Mattison points out, in a world increasingly shaped by AI, cultivating these uniquely human abilities is key to staying ahead. These examples make it clear: AI may be fast, but when it comes to nuanced, high-stakes decisions, humans remain indispensable.

2. AI Judgment

AI doesn't truly exercise judgment; instead, it generates outputs based on patterns and probabilities. As Tom Kehner explains:

"Large language models do not judge. But, they do generate language that resembles judgment" [7].

This means AI can deliver responses that sound confident even though no genuine deliberation stands behind them. This phenomenon, called "epistemia", creates the risk that AI outputs are passively accepted as valid evaluations [7].

That said, AI's strength lies in its ability to process and analyze data at incredible speed and scale. It can synthesize massive datasets almost instantly, which has driven its widespread adoption. For instance, 60% of executives now regularly rely on AI for business decisions, and Gartner predicts that by 2027, 50% of business decisions will be augmented or automated by AI agents [8]. The efficiency gains are hard to ignore: AI has enabled a 13,000x leap in materials discovery [6], and engineering teams using tools like GitHub Copilot report productivity increases of 30% to 50% for routine development tasks [10].

However, AI's approach to judgment is fundamentally different from human reasoning. While humans seek to understand causality - the "why" behind patterns - AI focuses solely on correlation. It can identify patterns but cannot test or understand the cause-and-effect relationships behind them [7]. For Microsoft CEO Satya Nadella, this puts the focus on what surrounds the models:

"2026 is about the 'scaffolding' above the models, not the models themselves" [6].

Nadella's point emphasizes the importance of "cognitive scaffolding", where organizations combine AI's capabilities with human expertise to refine and contextualize AI-generated outputs.

But there's a catch: AI generates options faster than most organizations can evaluate them. As a result, the competitive edge is shifting. It's no longer just about surfacing intelligence but about constraining it - setting clear objectives and risk boundaries [9]. A leader at Liberty Mutual Insurance put it this way:

"The moment AI enters the workflow, the real question isn't 'What does the model say?' It's 'Who gets to disagree with it, and how fast?'" [8].

To address this challenge, companies are adopting "Guardian Agents." These specialized AI systems are designed to oversee and limit the autonomy of other AI agents, ensuring decisions stay within safe and defined parameters [8].
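The idea of keeping agent decisions inside defined parameters can be sketched in a few lines of Python. This is a minimal illustration, not how any vendor's Guardian Agent works: the `ProposedAction` and `Policy` shapes, the `review` function, and every threshold below are hypothetical names and values chosen for the example. The point it shows is that the boundaries are set by people ahead of time, and anything outside them is either blocked or handed to a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An action another AI agent wants to take (hypothetical shape)."""
    kind: str          # e.g. "refund" or "send_email"
    amount_usd: float  # monetary exposure of the action
    confidence: float  # the agent's self-reported confidence, 0.0 to 1.0


@dataclass(frozen=True)
class Policy:
    """Risk boundaries set by humans (illustrative values only)."""
    allowed_kinds: frozenset
    max_amount_usd: float
    min_confidence: float


def review(action: ProposedAction, policy: Policy) -> str:
    """Return 'block', 'escalate', or 'allow' for a proposed action."""
    # Actions outside the approved list never run.
    if action.kind not in policy.allowed_kinds:
        return "block"
    # Approved action types that exceed a risk boundary go to a person.
    if action.amount_usd > policy.max_amount_usd:
        return "escalate"
    if action.confidence < policy.min_confidence:
        return "escalate"
    return "allow"


# Assumed policy: two approved action types, a $500 cap, 0.8 confidence floor.
policy = Policy(
    allowed_kinds=frozenset({"refund", "send_email"}),
    max_amount_usd=500.0,
    min_confidence=0.8,
)

small_refund = review(ProposedAction("refund", 120.0, 0.93), policy)
large_refund = review(ProposedAction("refund", 5000.0, 0.99), policy)
unknown_kind = review(ProposedAction("delete_account", 0.0, 0.99), policy)
```

Here the small refund is allowed, the large one is escalated, and the unapproved action type is blocked. Separating "escalate" from "block" is one way to answer the question of who gets to disagree with the model: a named human reviewer receives the escalated cases rather than the system silently proceeding or silently refusing.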

Here's a breakdown of AI's capabilities and their limits:

| AI Capability | Constraint/Limit | Performance Characteristic |
|---|---|---|
| Information Synthesis | Lacks lived experience and grounding | Processes vast datasets almost instantly |
| Pattern Recognition | Risks "intellectual convergence" | Operates with unmatched speed and scale |
| Option Generation | Cannot bear accountability | Produces options faster than humans can absorb |
| Linguistic Plausibility | Lacks metacognition (self-awareness) | Delivers confident, fluent responses |

Advantages and Disadvantages

Human vs AI Judgment: Strengths, Limitations and Performance Comparison

When comparing human and AI judgment, each brings its own strengths and challenges to the table, especially in the context of knowledge sharing and decision-making.

Human judgment shines in areas requiring contextual understanding, ethical decision-making, and personal accountability. As Ravikiran Kalluri, Assistant Teaching Professor at Northeastern University, points out:

"Leaders aren't paid because they can access information; they're paid to make decisions when the stakes are real and the outcomes are uncertain" [2].

That said, humans face limitations like slower decision-making, cognitive fatigue, and restricted capacity to handle large-scale tasks.

AI judgment, on the other hand, excels at processing vast amounts of data quickly and recognizing patterns at a scale humans can't match. It operates tirelessly and can support entire organizations simultaneously [2]. However, AI struggles with intuition and often falters in unpredictable or nuanced situations, as it may overlook critical context. Drew Garner, SVP of AI & Data Platform at Smartsheet, emphasizes this point:

"The human in the loop isn't optional. It's what makes the difference between AI that's confidently wrong and AI that's genuinely useful" [1].

Interestingly, data highlights a growing concern among workers using AI: 45% worry about "poor decisions or mistakes" stemming from AI recommendations, and 33% feel AI lacks the ability to understand complex or context-specific projects [1]. On the flip side, as AI adoption increases, so does the demand for management roles that require strong judgment and interpersonal skills - rising by 2.5% to 7.5% for every percentage point increase in AI usage [2].

| Criteria | Human Judgment | AI Judgment |
|---|---|---|
| Strengths | Contextual understanding, ethical decision-making, creative problem-solving, and accountability [2][1]. | Rapid data processing, large-scale pattern recognition, and constant availability [2]. |
| Limitations | Slower processing, cognitive fatigue, and limited capacity. | Lacks intuition and struggles with unpredictable or nuanced scenarios [2][1]. |
| Speed & Accuracy | Slower; relies on experience and nuanced discernment [1]. | Fast; highly accurate for routine tasks but prone to errors in complex contexts [2][1]. |
| Scalability | Limited by individual expertise and time. | Instantly scales knowledge across global teams [2]. |

The key isn't about choosing one over the other but understanding how to combine human and AI strengths effectively. This synergy can pave the way for smarter decisions and more impactful leadership strategies.

Conclusion

The future of knowledge sharing isn't about choosing between human judgment and AI - it’s about figuring out how to combine their strengths. AI can process enormous amounts of data and identify patterns at a scale that humans can't match. Meanwhile, humans bring essential qualities like contextual understanding, ethical reasoning, and accountability - things machines simply can't replicate.

To make the most of these strengths, organizations need to strike the right balance. This means determining where AI can add value and where human judgment is non-negotiable. For instance, implementing deliberate practices like "human thinking sprints" or AI-free problem-solving sessions can help maintain cognitive sharpness and critical thinking skills [2]. Additionally, capturing the wisdom of experienced professionals ensures that the nuanced judgment they provide can help train and refine AI systems [1]. This blend of human and AI contributions forms the foundation for long-term success.

Organizations that succeed will build what’s known as a Human Moat - leveraging uniquely human skills to create differentiation, trust, and consistent performance. This involves developing "meta-expertise", which includes managing multiple AI tools, validating their outputs, and connecting insights across diverse fields [2]. As AI adoption grows, roles that emphasize strong judgment and interpersonal skills will only become more valuable [2]. The real edge lies in knowing which questions to ask, when to challenge AI-driven recommendations, and how to navigate the uncertainties that only human judgment can address.

FAQs

When should humans override AI recommendations?

Humans need to step in and override an AI recommendation when it raises an ethical dilemma, misses contextual nuance, or calls for a deeper understanding of the situation than the system has. This is especially true in matters involving transparency, trust, or intricate human relationships - areas where AI might struggle to grasp the full scope or consequences of its suggestions.

How can teams prevent overreliance on AI at work?

Teams can steer clear of relying too heavily on AI by emphasizing the importance of human judgment as a critical counterpart to machine intelligence. To achieve this, organizations can focus on several key practices:

- Build AI literacy: Develop clear policies and provide cross-functional training to help team members understand AI's capabilities and limitations.

- Calibrate trust regularly: Have teams periodically assess how much they rely on AI systems so they keep the right balance between trust and skepticism.

- Encourage transparency and open dialogue: Build psychological safety so employees can question AI outputs without fear of being judged for it.

- Highlight human oversight: Leaders should emphasize the essential role of human input, particularly for tasks that involve ethical considerations or require nuanced decision-making.

By doing so, teams can ensure that AI serves as a tool to enhance, not replace, critical thinking and informed decision-making.
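One way a team might put a number on trust calibration is to compare how often it follows AI recommendations with how often those recommendations hold up on later review. The sketch below is an assumption-laden illustration rather than an established metric: the `Decision` record, the `calibration_gap` function, and the sample log are all hypothetical, and it presumes the team is able to review past recommendations after the fact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One reviewed decision from a team's log (hypothetical shape)."""
    accepted_ai: bool   # did the team follow the AI recommendation?
    ai_was_right: bool  # did the recommendation hold up on later review?


def calibration_gap(log: list[Decision]) -> float:
    """Acceptance rate minus observed AI accuracy.

    Positive: the team accepts AI output more often than its track
    record supports. Negative: the team overrides it more often than
    its track record suggests.
    """
    if not log:
        raise ValueError("need at least one reviewed decision")
    acceptance = sum(d.accepted_ai for d in log) / len(log)
    accuracy = sum(d.ai_was_right for d in log) / len(log)
    return acceptance - accuracy


# Made-up sample: 3 of 4 recommendations accepted, 2 of 4 were right.
log = [
    Decision(accepted_ai=True, ai_was_right=True),
    Decision(accepted_ai=True, ai_was_right=False),
    Decision(accepted_ai=True, ai_was_right=True),
    Decision(accepted_ai=False, ai_was_right=False),
]
gap = calibration_gap(log)  # 0.75 - 0.50 = 0.25
```

A single aggregate like this hides a lot, since it says nothing about which kinds of decisions are being over-trusted, but tracking it over time gives a team a concrete prompt for the periodic conversation about reliance.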

What does “Human Moat” mean in an AI-driven workplace?

In an AI-powered work environment, the term “Human Moat” highlights the unique strengths humans bring to the table - things like authority, accountability, and the ability to make nuanced, context-driven decisions. These qualities are tough for AI to mimic, giving humans a distinct advantage in areas where machines just can't measure up.