Gen Z vs. Boomers: Trust Challenges in the AI Era

Articles Apr 5, 2026 9:00:00 AM Seth Mattison 17 min read

AI is reshaping workplaces, but trust in this technology varies greatly between generations.

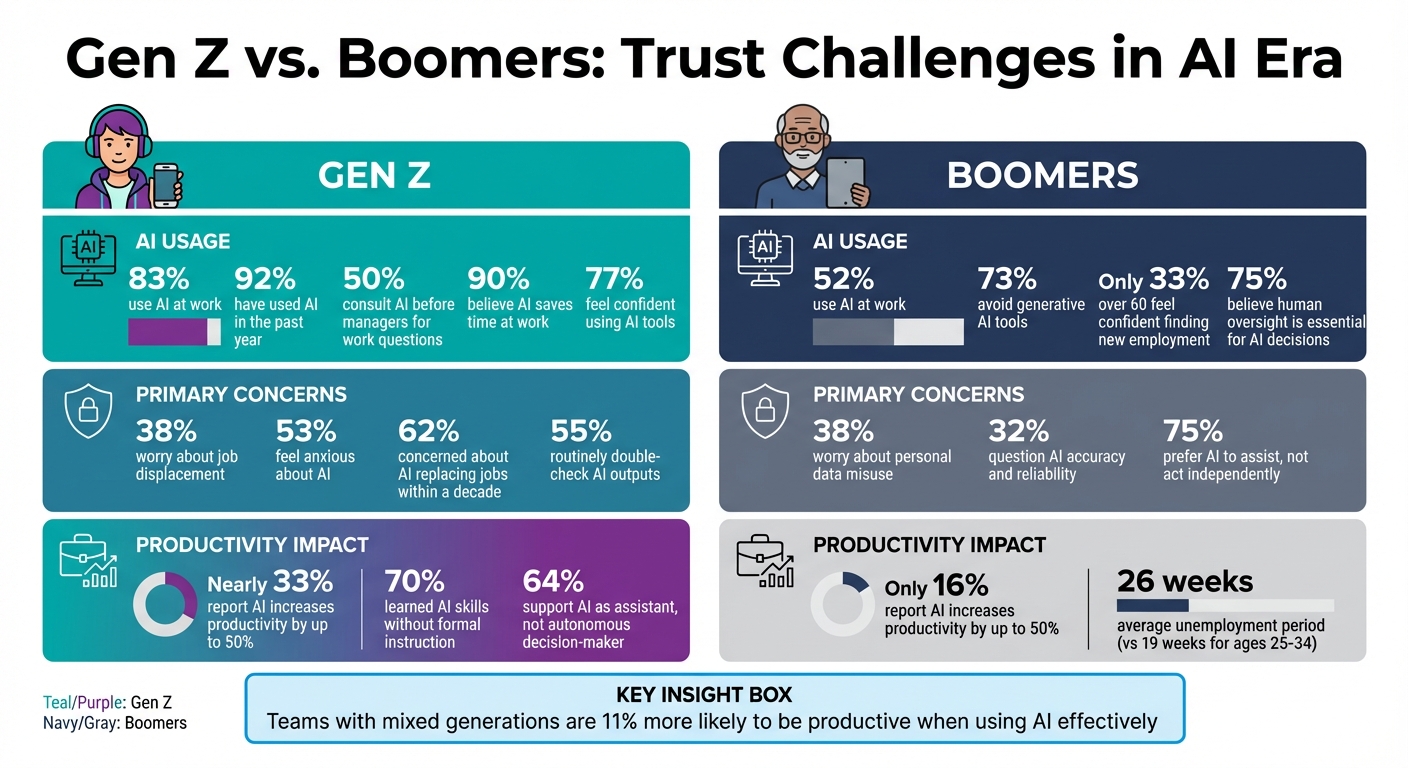

- Gen Z: 83% use AI at work, and nearly 50% would rather consult tools like ChatGPT than ask a manager. They view AI as a key skill, but 38% worry it will replace entry-level roles.

- Boomers: Only 52% actively use AI, and 73% report not using generative AI tools at all. Their concerns center on privacy (38%) and accuracy (32%), and they value human judgment over automation.

The generational gap isn't just about usage - it's about trust and approach. Gen Z sees AI as a collaborator, while Boomers demand transparency and accountability. Bridging these differences through mentorship and targeted training can help organizations thrive in an AI-driven world.

Gen Z vs Boomers AI Trust and Usage Statistics Comparison

How Gen Z Views AI and Trust

Growing Up Digital

For Gen Z, AI has always been part of the backdrop of their lives. A staggering 92% of American Gen Zers have used AI in the past year, and nearly half interact with AI tools daily[3]. This immersion has created a natural dependency on digital solutions, making AI feel as intuitive as breathing.

Unlike older generations who had to adjust to emerging technologies, Gen Z treats AI as an integral part of their lives. To them, it’s not just a search engine or tool - it’s more like the "operating system" that powers their day-to-day activities[1]. Whether it’s deciphering the tone of an email from their manager or navigating social dynamics in the workplace, they turn to AI for guidance. In fact, almost 50% of Gen Z respondents admit they’d rather consult AI for work-related questions than ask a colleague or manager[1].

This early familiarity with AI has set the stage for them to use it as a key resource in their professional lives.

AI Skills as Professional Assets

For Gen Z, knowing how to use AI isn’t just helpful - it’s critical for success in the modern workplace. About 77% of Gen Zers feel confident in their ability to use AI tools[6], and 70% have taken it upon themselves to learn these skills without formal instruction[5]. They experiment with tools like ChatGPT to optimize workflows, whether that’s debugging code or drafting client proposals. Take Shivam Nangia, for example: during a co-op internship in 2024, he automated data downloads and dashboard updates, demonstrating how AI can streamline repetitive tasks[4].

Interestingly, while 61% of Gen Z believe AI’s benefits outweigh its risks, 62% are still concerned about the possibility of AI replacing jobs within the next decade[3][6]. This doesn’t deter them, though - instead, it motivates them to sharpen their AI skills, ensuring they remain valuable in a workplace increasingly shaped by technology.

Their hands-on approach to learning AI reflects not just adaptability but a calculated effort to stay ahead in their careers.

AI as a Tool to Enhance Work

Armed with their self-taught expertise, Gen Z uses AI to boost efficiency and tackle more challenging tasks. An overwhelming 90% of Gen Z employees believe AI helps them save time at work[5], allowing them to focus on creative and strategic responsibilities. In fact, nearly a third report that AI increases their productivity by up to 50%, a stark contrast to only 16% of Baby Boomers who say the same[6].

From automating repetitive tasks like data entry and drafting emails to addressing more sensitive challenges like resolving workplace conflicts or drafting performance reviews, Gen Z is finding new ways to integrate AI into their workflows. About 33% of Gen Z workers rely on AI for these more nuanced and complex responsibilities[6].

At the same time, they remain cautious. Over half (55%) of Gen Z routinely double-check AI outputs[6]. They see AI as a collaborator - one that enhances their abilities but still requires human oversight. This balanced perspective highlights their trust in AI as a tool that complements, rather than replaces, human judgment.


How Boomers View AI and Trust

Concerns About Job Security

For Baby Boomers, the worry about employment isn't centered on AI taking over their jobs. Instead, it's about ageism shutting them out of opportunities. Only 33% of Americans over 60 feel confident they could find new employment. On top of that, older workers face longer periods of unemployment - 26 weeks on average, compared to 19 weeks for those aged 25-34. Chief Economist Daniel Zhao explains that this lack of confidence stems more from perceived exclusion than from fears of AI automation[8]. The real concern for Boomers is being left behind in workplaces increasingly shaped by AI because of stereotypes about their ability to adapt.

But job security isn't the only issue. Boomers also have serious questions about how AI handles their personal information.

Privacy and Transparency Issues

Boomers approach AI with a cautious mindset, shaped by years of dealing with internet scams and phishing attempts. A striking 73% of Boomers steer clear of generative AI tools. Among them, 38% are worried about their personal data being misused, while 32% are skeptical about the accuracy and origins of AI-generated information[2].

This distrust goes beyond concerns over data privacy. Boomers are particularly uneasy about deepfakes, synthetic voices, and fabricated facts - the murky areas of AI that blur the line between truth and deception[2].

"Older consumers, meanwhile, worry about deception - being misled by AI-generated content, manipulated by synthetic media, or simply unable to discern real from fake."[2]

Having seen technology evolve over decades, Boomers demand transparency and accountability before placing trust in AI systems.

Valuing Human Judgment

Beyond privacy and security, Boomers strongly believe in the importance of human judgment. Their skepticism toward AI is rooted in a key idea: AI lacks accountability. Joe Amditis, Associate Director of Operations at the Center for Cooperative Media at Montclair State University, puts it this way:

"AI tools should not be entrusted with roles requiring accountability... if they can't be punished or held accountable, then they don't need to be in charge of decisions that impact people's lives and livelihoods."[4]

Many Boomers, especially those in leadership positions, argue that AI should serve as a tool to assist, not replace, human decision-making. Seventy-five percent of Boomers believe that decisions affecting people's lives should always involve human oversight[9]. They see experience as something that algorithms simply can't replicate[1].

These perspectives highlight the importance of creating leadership strategies that address generational differences in attitudes toward AI and build trust across the board.

Comparing Trust Challenges Between Generations

Trust Factors Comparison

The trust gap between Gen Z and Boomers goes beyond age - it’s rooted in their differing relationships with technology. Sam Altman, CEO of OpenAI, summed it up well:

"Older people use ChatGPT as a Google replacement... people in college use it as an operating system" [1].

This divide influences everything from how they approach work to their deeper concerns about AI in the workplace. While Gen Z embraces AI with enthusiasm, only 52% of Baby Boomers actively use it [1], and 73% of Boomers report not using generative AI at all [2]. However, even among Gen Z, 53% report feeling anxious about AI [1].

The reasons behind these trust issues vary significantly between the generations. For Gen Z, the main concern is economic - 38% fear that AI will replace entry-level roles and internships, potentially derailing their career paths before they even begin [2]. Boomers, on the other hand, focus on accuracy and privacy, with 38% worried about personal data misuse and 32% questioning the reliability of AI-generated information [2].

Workplace habits also highlight these generational differences. Nearly half of Gen Z workers prefer consulting AI for work-related questions rather than seeking advice from a manager [1]. Bob Hutchins, AI Strategist and CEO of Human Voice Media, explains:

"Digital natives will trust the screen more than trusting the feeling, the understanding and the context of a room" [1].

In contrast, Boomers lean toward human interaction, valuing face-to-face communication and leadership input for decision-making.

Here’s a snapshot of how these trust factors differ:

| Trust Factor | Gen Z Perspective | Boomer Perspective |
|---|---|---|
| Comfort with AI Adoption | High; 83% actively use AI at work, viewing it as a necessary skill [1]. | Low; only 52% actively use AI, and most avoid generative AI altogether [1][2]. |
| Primary Trust Barrier | Economic: 38% fear job displacement and career setbacks [2]. | Accuracy and privacy: 38% worry about data misuse, 32% question AI reliability [2]. |
| Privacy and Security Views | Generally at ease with digital integration [1]. | Strong concerns about personal data misuse [2]. |
| Workplace Reliance | 50% prefer AI for work queries over consulting managers [1]. | Favor human-driven collaboration and oversight [1]. |
| Decision-Making Preference | 64% support AI as an assistant, not an autonomous decision-maker [9]. | 75% prefer AI to assist but not act independently [9]. |

Recognizing these differences is key for leaders aiming to bridge the generational trust divide.

How Leaders Can Bridge the Trust Gap

Reverse Mentorship Programs

Reverse mentorship brings Gen Z employees and Boomers together, creating opportunities for mutual learning. Younger workers share their expertise in AI, social selling, and data analytics, while their senior counterparts offer insights into relationship building and negotiation. The impact of this collaboration is clear: 62% of Gen Z employees actively help senior colleagues upskill in AI, 72% of younger workers report their coaching improves team productivity, and 77% of directors say Gen Z's AI knowledge enhances departmental performance [11].

Steve Cox, CEO of Clari and Salesloft, highlights the synergy of this approach:

"If you took the best parts of relationship building... and then you took all the benefit of AI technology... what you'd end up with is an even better result than either generation could deliver on their own" [10].

To make reverse mentorship successful, it’s essential to approach it as a shared effort rather than a directive from the top. Cox suggests a "stair-step approach", where cross-generational champions work together to drive AI adoption. This resonates with the 79% of sales professionals who want to be paired with someone from a different generation [10].

Alongside mentorship, tailored training programs can help Boomers overcome their hesitation toward technology.

AI Training for Boomers

Boomers often need more than access to AI tools - they need hands-on, practical training to build confidence and reduce reluctance [7]. Many Boomers avoid using generative AI altogether, so effective programs should focus on real-world applications that enhance critical thinking and creativity [7]. Instead of framing AI as just a tool for speed [12], training should emphasize how it can handle routine tasks, allowing more time for strategic work and personal connection.

Leaders should also understand that banning AI outright is counterproductive. One in six Gen Z workers admits to using AI even when explicitly told not to [7]. Instead of prohibitions, clear and practical guidelines on how and when to use AI can foster transparency and trust across generations.

While AI training is essential, leaders must also encourage the development of distinctly human skills that complement technology.

Building the Human Moat

Focusing on what makes humans irreplaceable is a powerful way to bridge generational trust gaps. Seth Mattison’s Human Moat framework emphasizes skills like judgment, empathy, and leadership - qualities that AI cannot replicate. This approach not only strengthens team dynamics but also builds trust in an AI-driven world.

For Gen Z, who often worry that AI could lead to laziness or diminished intelligence [7], the Human Moat highlights how AI can free up time for meaningful work and deeper connections [7]. For Boomers, who may be more concerned about accuracy and privacy, it underscores the importance of human judgment in evaluating AI outputs [2].

Surveys reveal that most employees see AI as a tool to close experience gaps and promote knowledge sharing [10][12]. By helping teams develop a Human Moat, leaders can foster an environment where AI is seen as a partner in human excellence rather than a replacement for it.

Conclusion

The generational trust gap in the AI era presents a chance to bring together diverse strengths. As the data above shows, younger workers are far more enthusiastic about AI than their senior colleagues. Yet teams with a mix of generations are 11% more likely to be productive when using AI effectively [1]. This shows that differences in approach can become an advantage when managed thoughtfully.

The real edge now lies in the qualities that AI can't duplicate. This is what some call the "Human Moat" - skills like judgment, contextual awareness, and relationship-building, which grow stronger with experience. Jeri Doris, Chief People Officer at Justworks, puts it clearly:

"I've seen brilliant people who can do these amazing things with AI, but where they fall down is with human discernment and leadership - and that just comes with reps and experience" [1].

This shift in emphasis from AI output to human judgment opens the door for leadership that bridges generational gaps. Tools like reverse mentorship and customized AI training can transform these differences into a competitive strength. Leaders who favor guidance over restriction are better placed to succeed, since outright bans tend to be bypassed anyway: one in six Gen Z workers admits to using AI even when explicitly told not to [7]. Such leaders create workflows that reintroduce critical thinking into the process and help teams see that AI isn’t replacing human value - it’s enhancing it.

Looking ahead, leaders must focus on building partnerships across generations that strengthen trust and boost performance. Success won’t come from having the most advanced AI tools but from fostering the strongest Human Moat. Organizations that combine agility with experience will thrive, creating spaces where collaboration replaces competition. In a world overflowing with intelligence, it’s the distinctly human skills - sharpened through generational collaboration - that will set companies apart.

FAQs

How can leaders build trust in AI across generations?

Leaders can build trust in AI by focusing on transparency, actively including employees in the adoption process, and providing consistent training opportunities. According to Seth Mattison, teams with high levels of trust excel when they prioritize open communication and collaboration, particularly when addressing sensitive topics such as ethics.

Generational attitudes toward AI also play a role. While Gen Z tends to be more open to embracing AI, Boomers often approach it with greater caution. Adapting strategies to align with these differing viewpoints can help bridge gaps and foster trust across the entire workforce.

What are safe rules for using AI at work without risking privacy?

To use AI responsibly in the workplace while safeguarding privacy, prioritize transparency, ethical data practices, and clear guidelines. Make sure AI systems clearly communicate how they use data, involve employees in the decision-making process when adopting new tools, and offer training on responsible AI usage.

It’s also important to set clear policies that outline acceptable use, address potential automation bias, and emphasize the importance of human oversight. By doing so, you can ensure compliance with privacy laws and foster trust among team members.

How do teams keep human judgment in AI-assisted decisions?

Teams can maintain the essential role of human judgment in AI-assisted decisions by focusing on transparency, encouraging open dialogue, and investing in training. Involving employees in the process of adopting AI fosters trust and ensures that human oversight remains central. Offering training equips team members with the skills to critically evaluate AI-generated outputs, reducing the risk of overdependence. By combining AI’s capabilities with human insight, organizations can position AI as a supportive tool that enhances, rather than replaces, human decision-making.