Judgment Skills for AI-Driven Decision-Making

Seth Mattison | May 6, 2026 | 20 min read

In the age of AI, human judgment remains indispensable. While AI excels at processing data and identifying patterns, it struggles with ethical reasoning, contextual understanding, and anticipating long-term impacts. Leaders must balance AI's capabilities with human insight to make better decisions.

Key Takeaways:

- AI's Role: Handles data processing and pattern recognition but lacks the ability to weigh trade-offs or consider ethical dimensions.

- Human Judgment: Essential for interpreting AI outputs, managing uncertainty, and aligning decisions with organizational goals.

- Common Challenges: Overwhelming data, mistrust in AI outputs, and the risk of over-reliance on automation.

- Practical Strategies:

  - Use a decision triage system to allocate tasks between AI and humans.

  - Document decisions to build a "negative knowledge" library for future learning.

  - Focus on developing judgment skills in leadership and junior roles.

AI should assist decision-making, not replace it. Success lies in combining human intuition with AI insights, ensuring decisions are thoughtful, ethical, and impactful.

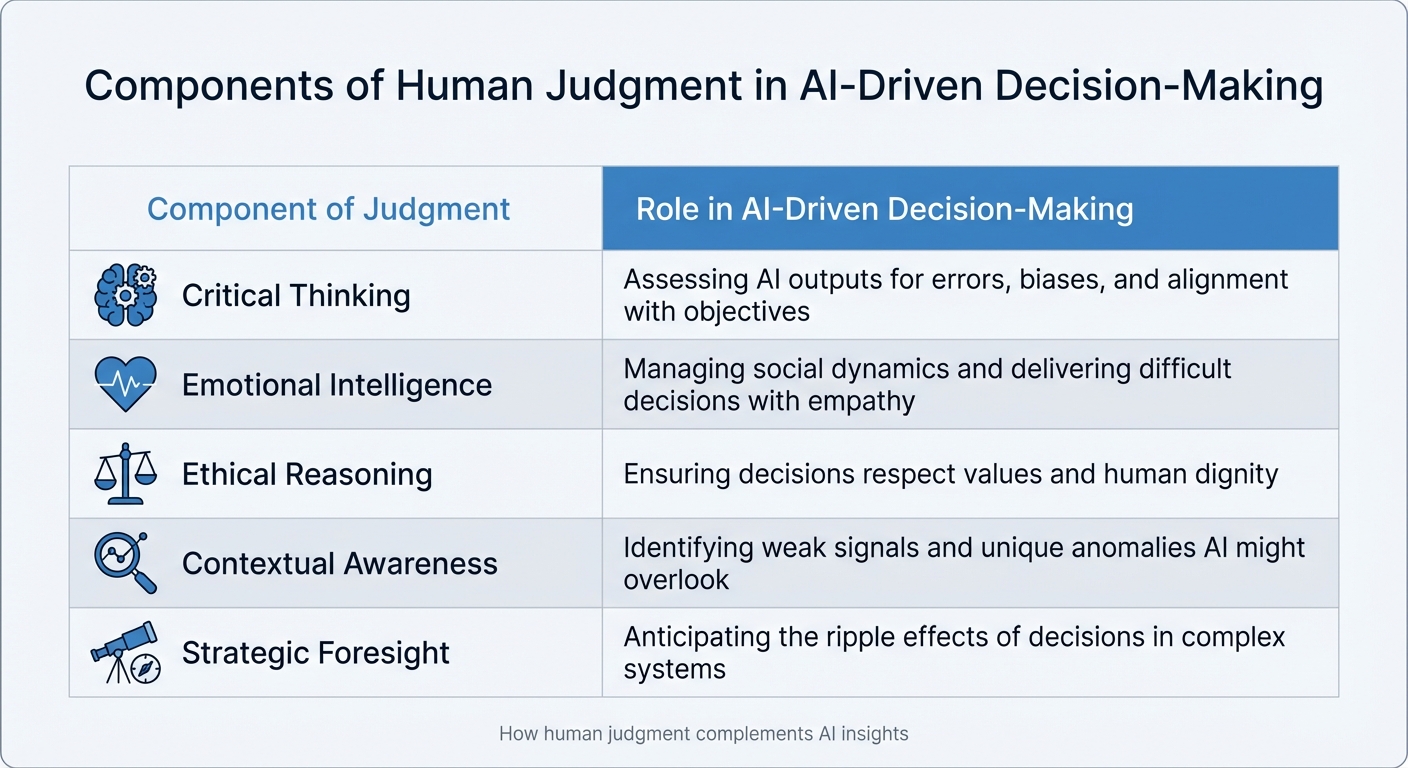

Core Components of Judgment in AI-Driven Decision-Making

Components of Human Judgment in AI-Driven Decision-Making

Building on the earlier discussion of why human judgment matters in AI-driven decisions, let’s break down its key elements. Effective judgment combines several abilities to turn AI’s raw output into sound decisions. By understanding these components, leaders can better direct their efforts and recognize where AI still requires human oversight.

Critical Thinking and Analytical Rigor

AI outputs aren’t always reliable - they may fabricate information, carry biases from their training data, or lack necessary context. David S. Duncan, a Partner at Disruptive Edge, outlines five types of judgment that leaders must develop: evaluative judgment (determining if AI results align with goals), tradeoff judgment (balancing competing priorities when no option is ideal), anticipatory judgment (predicting second-order effects), contextual judgment (deciding when exceptions to general rules are appropriate), and editorial judgment (ensuring outputs fit the organization’s context) [3].

While AI excels at identifying patterns in data, humans bring critical judgment by factoring in ethical, contextual, and strategic dimensions that numbers alone can’t address [4]. These analytical skills are the foundation for layering in emotional intelligence, which adds depth to decision-making.

Emotional Intelligence and Ethical Considerations

AI doesn’t feel regret or understand the human consequences of a decision, no matter how technically accurate it is. This is where emotional intelligence becomes a moral compass, helping leaders grasp the broader stakes [5]. AI can manage tasks that are repetitive or hazardous, but humans must provide what’s called the "Fifth D": discernment. This involves recognizing when decisions should prioritize values, human dignity, or long-term impact over mere efficiency [5].

Empathy plays a key role here, enabling leaders to navigate social complexities and communicate tough decisions in ways that maintain trust and morale [4]. Emotional intelligence ensures decisions don’t just work on paper but resonate with the human realities they affect.

Contextual Awareness and Big-Picture Thinking

AI’s strength lies in analyzing historical data for patterns, but groundbreaking opportunities often come from weak signals that diverge from past trends [2]. That’s why contextual judgment - knowing when to follow general rules versus when to adapt to unique circumstances - is essential [3].

Although AI can synthesize vast amounts of information, humans are crucial for aligning decisions with long-term goals, a critical element in maintaining a Human Moat. AI might identify correlations that seem significant but lack true causation. Contextual awareness allows leaders to differentiate between coincidence and meaningful cause-and-effect relationships, leading to decisions with lasting impact [2].

"The tools amplified existing judgment rather than compensating for its absence." - David S. Duncan, Partner, Disruptive Edge [3]

The table below highlights how these judgment elements complement AI insights:

| Component of Judgment | Role in AI-Driven Decision-Making |
|---|---|
| Critical Thinking | Assessing AI outputs for errors, biases, and alignment with objectives [2][4]. |
| Emotional Intelligence | Managing social dynamics and delivering difficult decisions with empathy [4]. |
| Ethical Reasoning | Ensuring decisions respect values and human dignity [5][4]. |
| Contextual Awareness | Identifying weak signals and unique anomalies AI might overlook [2][3]. |
| Strategic Foresight | Anticipating the ripple effects of decisions in complex systems [4]. |

Strategies to Strengthen Judgment in AI-Driven Systems

Balancing AI Insights with Human Intuition

Effective collaboration between AI and humans hinges on a concept called decision triage, which organizes decisions into three categories based on their stability and complexity.

- Tier A decisions: These are stable and predictable. AI can handle them autonomously within well-defined limits.

- Tier B decisions: These are more ambiguous and evolving. Here, AI highlights patterns and inconsistencies, while humans focus on framing questions and setting boundaries.

- Tier C decisions: These involve novel, high-stakes scenarios. Humans take the lead, using AI as a tool for analysis and stress-testing [2].
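The three tiers above can be sketched as a small routing rule. This is only an illustration: the attribute names (`is_novel`, `is_high_stakes`, `is_ambiguous`) and the exact cutoffs are assumptions made for the example, not part of the triage framework as cited, and a real team would define its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    A = "AI decides autonomously within well-defined limits"
    B = "AI highlights patterns; humans frame questions and set boundaries"
    C = "Humans lead; AI supports analysis and stress-testing"


@dataclass
class Decision:
    name: str
    is_novel: bool        # hypothetical flag: no close precedent exists
    is_high_stakes: bool  # hypothetical flag: large or hard-to-undo impact
    is_ambiguous: bool    # hypothetical flag: goals or inputs still evolving


def triage(decision: Decision) -> Tier:
    """Route a decision to a tier using the assumed flags above."""
    # Novel and high-stakes together: humans take the lead.
    if decision.is_novel and decision.is_high_stakes:
        return Tier.C
    # Any single source of uncertainty: shared human-AI handling.
    if decision.is_ambiguous or decision.is_novel or decision.is_high_stakes:
        return Tier.B
    # Stable and predictable: safe to automate within limits.
    return Tier.A


if __name__ == "__main__":
    for d in (
        Decision("Reorder standard inventory", False, False, False),
        Decision("Adjust pricing in a shifting market", False, False, True),
        Decision("Enter an untested market", True, True, True),
    ):
        print(f"{d.name}: Tier {triage(d).name}")
```

Writing the rule down, even this crudely, forces a team to agree on what counts as "novel" or "high-stakes" before a contested decision arrives.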

This approach addresses a common issue: 72% of leaders hesitate to make decisions due to overwhelming data or a lack of trust in the information [6]. By defining when to rely on AI and when to trust human instincts, leaders can make quicker decisions without compromising quality.

One practical method is to set aside "experimental budgets" - dedicating 5–10% of resources to instinct-driven projects. AI can assist with simulations, while streamlined approval processes and clear exit strategies keep risks manageable [2]. If leaders override AI recommendations, they should document their reasoning, evidence, and lessons learned. These records become valuable references for future decisions [2].

This triage naturally leads into scenario planning, a structured method to refine decision-making further.

Developing Scenario-Based Decision Frameworks

Scenario planning becomes more effective when you separate decisions into one-way doors and two-way doors.

- One-way doors: Irreversible decisions that demand thorough analysis and deliberation.

- Two-way doors: Reversible decisions that allow for quicker action, often with AI's assistance [6].

This approach, inspired by Amazon, helps leaders focus their energy where it matters most. For high-stakes, one-way decisions, AI can generate multiple "what-if" scenarios using Causal AI. This method not only identifies patterns but also uncovers the cause-and-effect relationships driving them [2].

For example, in March 2026, an AI-enhanced leadership workspace at Progyny cut decision cycles by 30–50% and reduced executive prep time by 40–60% [1]. AI's role here was to speed up the "Sense → Think → Decide → Act" process, allowing leaders to concentrate on defining problems rather than gathering data. To ensure balance, organizations should set clear escalation triggers, such as reputational risks or unforeseen ripple effects, to guide when human intervention is necessary [8].

While scenario planning optimizes technical decisions, the next step ensures AI complements human leadership instead of overshadowing it.

Using AI for Enhanced Perspective, Not Replacement

AI should function as a reliable assistant, not the ultimate decision-maker. It excels at processing data and testing ideas but still requires human oversight for situations involving moral complexity or unprecedented challenges [7][2]. This distinction is critical - 95% of generative AI projects fail to deliver meaningful results when companies neglect to redesign workflows and decision-making processes [1].

"The moment AI enters the workflow, the real question isn't 'What does the model say?' It's 'Who gets to disagree with it, and how fast?'" - Leader at Liberty Mutual Insurance [6]

DBS Bank illustrates this principle with its "PURE" framework (Purposeful, Unsurprising, Respectful, Explainable). This framework underpins their iGrow AI platform, which supports transparent, data-driven decisions about employee development while maintaining oversight from senior committees. The goal is to enhance decision-making without compromising integrity [6].

This approach aligns with a growing trend: 60% of executives now use AI regularly to assist their decisions [6]. However, AI's role should be to elevate human thinking - not to remove the responsibility for deciding which path forward is worth pursuing.

Building a Human Moat Through Superior Judgment Practices

What Is the Human Moat?

The Human Moat refers to a competitive edge rooted in distinctly human abilities that AI simply can't replicate - things like ethical judgment, intuition, and understanding context. As AI takes over tasks like data analysis and information processing, the real advantage lies in how humans can interpret and apply that information with wisdom [4].

Here’s why this matters: AI is great at spotting patterns and making predictions, but it struggles with the kind of nuanced decision-making that requires weighing ethics, social dynamics, and long-term strategy. As Harvard Business School professors Marco Iansiti and Karim Lakhani explain, "AI transforms business, but humans define its purpose" [4].

David S. Duncan highlights this challenge in what he calls the Judgment Paradox: while AI increases the need for solid judgment, it also reduces the opportunities to develop it. Traditionally, entry-level tasks - often messy and repetitive - served as training grounds for building judgment. But now that AI handles these tasks, organizations risk losing a critical skill [3].

A study from September 2025 by Harvard Business School and UC Berkeley researchers underscores this issue. They examined 640 small business entrepreneurs in Kenya and found that AI-driven advice only improved outcomes for those who already had strong business judgment. Others, who followed generic advice like "increase advertising", saw an 8% decline in performance [9]. As Duncan puts it, "The tools amplified existing judgment rather than compensating for its absence" [3].

Grasping the concept of the Human Moat is crucial for weaving these uniquely human traits into leadership and decision-making.

Embedding Judgment as a Core Leadership Discipline

To make the Human Moat a reality, organizations need to integrate judgment into their leadership models, ensuring it drives faster and smarter decisions [1].

One way to do this is to apply the three-tier decision triage framework, which helps identify where human judgment is most valuable [2]. Additionally, companies can create "gamble budgets", the same 5–10% experimental allocation discussed earlier, for instinct-driven, high-conviction opportunities that don’t rely on traditional data. These initiatives, backed by simplified approval processes and clear exit strategies, leave room for bold, innovative ideas [2].

When leaders override AI recommendations, they should provide a one-page rationale explaining their hypothesis, evidence, and what can be learned. This practice builds a "negative knowledge" library, which helps refine decision-making over time [2].

Lastly, it’s vital to redesign junior roles to focus on developing judgment. Since AI now handles many foundational tasks, organizations should use tools like case studies, simulations, and structured reflection to teach pattern recognition and decision-making skills [3].

"The unit of change in the AI era isn't the job. It's the judgment required for the job." - Barry O'Reilly [1]

Measuring and Sustaining Judgment Effectiveness

Key Metrics for Evaluating Judgment in Decision-Making

One of the most important metrics for evaluating decision-making isn't just about the AI model's performance - it’s about how well the human-AI team can identify when the system fails. The focus shifts from asking, "How good is the model?" to "How effectively can our team recognize and respond to its failures?" [12].

To achieve this, organizations should monitor four key metric categories:

- Outcome metrics: These assess whether AI involvement improves decision-making outcomes. They look at team performance and how effectively errors are identified and corrected.

- Reliance metrics: These track patterns like "accept-on-wrong" (a person accepts an incorrect AI output) or "changed-to-wrong" (a person abandons a correct initial judgment after seeing incorrect AI input).

- Safety signals: These measure governance issues, such as how often decisions are rolled back or near-misses occur.

- Learning metrics: These evaluate whether the team is improving its readiness to work with AI over time [12].
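To make the reliance metrics concrete, here is a minimal sketch of how the two named patterns might be computed from a log of reviewed cases. The `Case` fields and the choice of denominators are assumptions for illustration; the source names the patterns but does not prescribe a formula.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Case:
    human_initial_correct: bool  # was the person's first judgment right?
    ai_correct: bool             # was the AI output right?
    followed_ai: bool            # did the final decision follow the AI?
    final_correct: bool          # was the final decision right?


def reliance_metrics(cases: List[Case]) -> Dict[str, float]:
    """Compute two reliance rates under the assumed definitions below."""
    # Accept-on-wrong: of the cases where the AI was wrong,
    # how often did the person go along with it anyway?
    ai_wrong = [c for c in cases if not c.ai_correct]
    accepted_wrong = sum(1 for c in ai_wrong if c.followed_ai)

    # Changed-to-wrong: of the cases where the person started out right,
    # how often did following the AI lead to a wrong final decision?
    started_right = [c for c in cases if c.human_initial_correct]
    changed_wrong = sum(
        1 for c in started_right if c.followed_ai and not c.final_correct
    )

    return {
        "accept_on_wrong_rate": accepted_wrong / len(ai_wrong) if ai_wrong else 0.0,
        "changed_to_wrong_rate": changed_wrong / len(started_right) if started_right else 0.0,
    }


if __name__ == "__main__":
    sample = [
        Case(True, False, True, False),   # right at first, followed a wrong AI
        Case(True, False, False, True),   # right at first, rejected a wrong AI
        Case(False, True, True, True),    # wrong at first, corrected by the AI
        Case(True, True, True, True),     # everyone agreed and was right
    ]
    print(reliance_metrics(sample))
```

Both rates depend on capturing the person's initial judgment before they see the AI output, which is a process design choice as much as a measurement one.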

For example, in March 2025, a major bank rolled out a machine-learning fraud detection system to replace static rules. By closely tracking key performance indicators (KPIs), the bank discovered significant improvements: fraud-related financial losses dropped by 60%, false positives were reduced by 80%, and the system delivered a fivefold return on investment (ROI) within a year [13]. The real success wasn’t just in the AI’s accuracy but in how well human analysts adjusted their reliance on the system.

When it comes to AI-generated content, organizations should assess it for accuracy, relevance, coherence, helpfulness, and user trust. One positive trust indicator is a response acceptance rate above 70%, meaning more than 70% of AI-generated answers are used without modification [11].
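As a quick illustration of the acceptance-rate check, the sketch below treats each AI-generated answer as either used unmodified or not. The function name and the input format are assumptions for the example; only the 70% threshold comes from the text above.

```python
from typing import Iterable

TRUST_THRESHOLD = 0.70  # threshold cited in the text above


def acceptance_rate(used_unmodified: Iterable[bool]) -> float:
    """Share of AI-generated answers that were used without modification."""
    outcomes = list(used_unmodified)
    if not outcomes:
        raise ValueError("No responses to evaluate.")
    return sum(outcomes) / len(outcomes)


if __name__ == "__main__":
    # Hypothetical review of ten answers: eight used as-is, two edited.
    rate = acceptance_rate([True] * 8 + [False] * 2)
    print(f"Acceptance rate: {rate:.0%}, above threshold: {rate > TRUST_THRESHOLD}")
```

A high rate is worth reading alongside the reliance metrics, since unmodified acceptance can also reflect automation bias rather than earned trust.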

These metrics provide the foundation for ongoing learning, ensuring that every decision becomes an opportunity to refine judgment.

Continuous Learning and Feedback Loops

Metrics are just the beginning - leaders also need systems that turn every decision into a chance to sharpen human judgment alongside AI insights. Developing judgment isn’t a one-time training event; it’s a continuous process. One way to approach this is to apply the three-tier decision triage system to day-to-day decisions:

- Tier A decisions: For stable, predictable scenarios, rely on automation.

- Tier B decisions: For ambiguous or evolving situations, use a mix of human and AI input.

- Tier C decisions: For novel, high-stakes issues, prioritize human judgment with AI acting as a support tool [2].

To support this, organizations should maintain decision logs that document all human-AI disagreements, including the reasoning behind each decision. When leaders override AI recommendations, they should provide a one-page rationale detailing their hypothesis, supporting evidence, and a learning plan. Over time, these logs become a valuable archive of case studies for improving judgment [2][6].
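One possible shape for such a decision log is sketched below. The class and field names are hypothetical; they simply mirror the three elements of the one-page rationale (hypothesis, supporting evidence, learning plan) and refuse entries that leave any of them blank.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class OverrideRecord:
    decision: str
    ai_recommendation: str
    human_choice: str
    hypothesis: str            # why the leader expects the override to be right
    evidence: List[str]        # what supports that hypothesis
    learning_plan: str         # how and when the outcome will be reviewed
    logged_on: date = field(default_factory=date.today)
    outcome: Optional[str] = None  # filled in later, once results are known


class DecisionLog:
    """In-memory log of human-AI disagreements (illustrative only)."""

    def __init__(self) -> None:
        self._records: List[OverrideRecord] = []

    def add(self, record: OverrideRecord) -> None:
        # Enforce the rationale: all three parts must be present.
        if not (record.hypothesis.strip() and record.evidence and record.learning_plan.strip()):
            raise ValueError("An override needs a hypothesis, evidence, and a learning plan.")
        self._records.append(record)

    def awaiting_outcome(self) -> List[OverrideRecord]:
        """Overrides whose results have not yet been recorded."""
        return [r for r in self._records if r.outcome is None]


if __name__ == "__main__":
    log = DecisionLog()
    log.add(OverrideRecord(
        decision="Q3 regional launch",
        ai_recommendation="Delay launch",
        human_choice="Launch on schedule",
        hypothesis="Local partner demand is not visible in historical data",
        evidence=["Three signed letters of intent"],
        learning_plan="Review sales against forecast after 90 days",
    ))
    print(len(log.awaiting_outcome()), "override(s) awaiting an outcome")
```

Tracking which overrides still lack a recorded outcome is what turns the log from an archive into a feedback loop.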

Additionally, track the outcomes of intuition-driven decisions and create "intelligent failure" reports to build a repository of lessons learned from mistakes [2]. Regularly ask diagnostic questions like: "Who is making the critical decisions, and who is just reviewing work?" or "Are we shielding people from ambiguity instead of encouraging them to engage with it?" [3].

Finally, adopt decision hygiene rituals - structured moments to pause and test assumptions before moving forward. For example, spend five minutes at the end of each day reviewing one major decision to uncover nuances that raw data or AI might have overlooked. This simple daily practice helps transform judgment into a measurable and disciplined skill [4][10].

Conclusion

AI doesn’t replace human judgment - it enhances it. The organizations that gain the most from AI are those that rethink how decisions are made, not just how tasks are automated. While 90% of organizations now use AI, fewer than 40% report seeing measurable financial results [1]. The key difference lies in treating AI as a tool to assist decision-making, not as the decision-maker itself [14].

What’s truly changing is the shift from "getting ready to decide" to focusing on the act of deciding. AI eliminates much of the effort required to gather and process information, allowing leaders to concentrate their energy on the critical moment of choice - when they must weigh trade-offs, interpret subtle signals, and commit to a path forward that data alone cannot validate [1].

"AI doesn't make leaders decisive. It removes the friction that keeps them indecisive." - Barry O'Reilly [1]

This evolution underscores the growing importance of strong judgment across an organization. While AI simplifies many entry-level tasks, it also raises the bar for high-level decision-making [3]. Leaders must intentionally craft workflows that allow junior team members to grapple with ambiguity and take ownership of outcomes, rather than simply reviewing AI-generated outputs. Pete Anevski, CEO of Progyny, captured this well when he shared his vision with his team in 2026:

"We're not using AI to reduce headcount. We're using it to amplify your human skills - to elevate, not eliminate your work." - Pete Anevski, CEO of Progyny [1]

In this new landscape, competitive advantage belongs to those who can combine human intuition with machine-driven insights [1]. Use AI for analysis, but reserve final decisions for situations that require complex, nuanced judgment. Build habits around decision-making, document instances where humans override AI, and create feedback loops so every decision becomes a learning opportunity.

Judgment remains the defining factor in whether AI serves as a powerful ally or an expensive misstep. This balance is central to the Human Moat concept, reaffirming that human judgment is - and will always be - irreplaceable. Leaders who master this integration will turn intelligence into action and redefine what it means to lead in the age of AI.

FAQs

How do I know when to trust AI vs. override it?

AI excels at handling tasks like data processing, synthesis, and automation, making it an invaluable tool for streamlining operations. However, when it comes to areas that involve ethics, values, and long-term strategy, human judgment is irreplaceable. These are domains where nuance and moral reasoning play a critical role - something AI can't replicate.

To get the best of both worlds, structured workflows are key. By combining AI's efficiency with human oversight, organizations can ensure that decisions are both informed and responsible. That said, it's vital to remain alert to automation bias - the tendency to accept AI-generated outputs without proper scrutiny. Leaders must be prepared to step in and critically evaluate when needed.

Ultimately, effective leadership requires a thoughtful balance. Policies and frameworks should be in place to guide AI use, while keeping human input central to decision-making. This ensures that while AI handles the heavy lifting, the final call reflects human values and strategic foresight.

What’s a simple way to apply decision triage in my team?

To use decision triage effectively, start by sorting decisions based on their complexity and impact. Break them down into three groups:

- Decisions that can be quickly automated by AI.

- Decisions that need some level of human oversight alongside AI.

- Decisions that require in-depth human judgment and critical thinking.

By letting AI take over routine tasks, your team can concentrate on the more complex, high-impact decisions that benefit from human expertise. This approach streamlines processes and ensures your team’s focus remains on what truly matters.

How can we build judgment skills if AI automates entry-level work?

To keep judgment skills sharp as AI takes over entry-level tasks, it's important to focus on qualities that machines can't replicate - like critical thinking, creativity, and courage. Employees should be encouraged to dive into complex, unpredictable challenges that require hands-on learning and problem-solving. Leaders play a crucial role here by blending data-driven insights with human judgment and fostering an environment where curiosity and experimentation are valued. This approach ensures that decision-making stays balanced between AI outputs and human intuition, keeping judgment skills relevant and strong.