AI Role Transitions: Building Trust in Teams

By Seth Mattison | May 7, 2026

AI is reshaping workplaces, but trust is the key to success.

When employees trust AI, productivity improves, decision-making speeds up, and collaboration thrives. Without trust, AI tools are ignored or misused. Leaders face challenges like skepticism, fear of job loss, and confusion about AI’s role. Addressing these concerns requires clear communication, ethical guidelines, and involving employees in the process.

Key Takeaways:

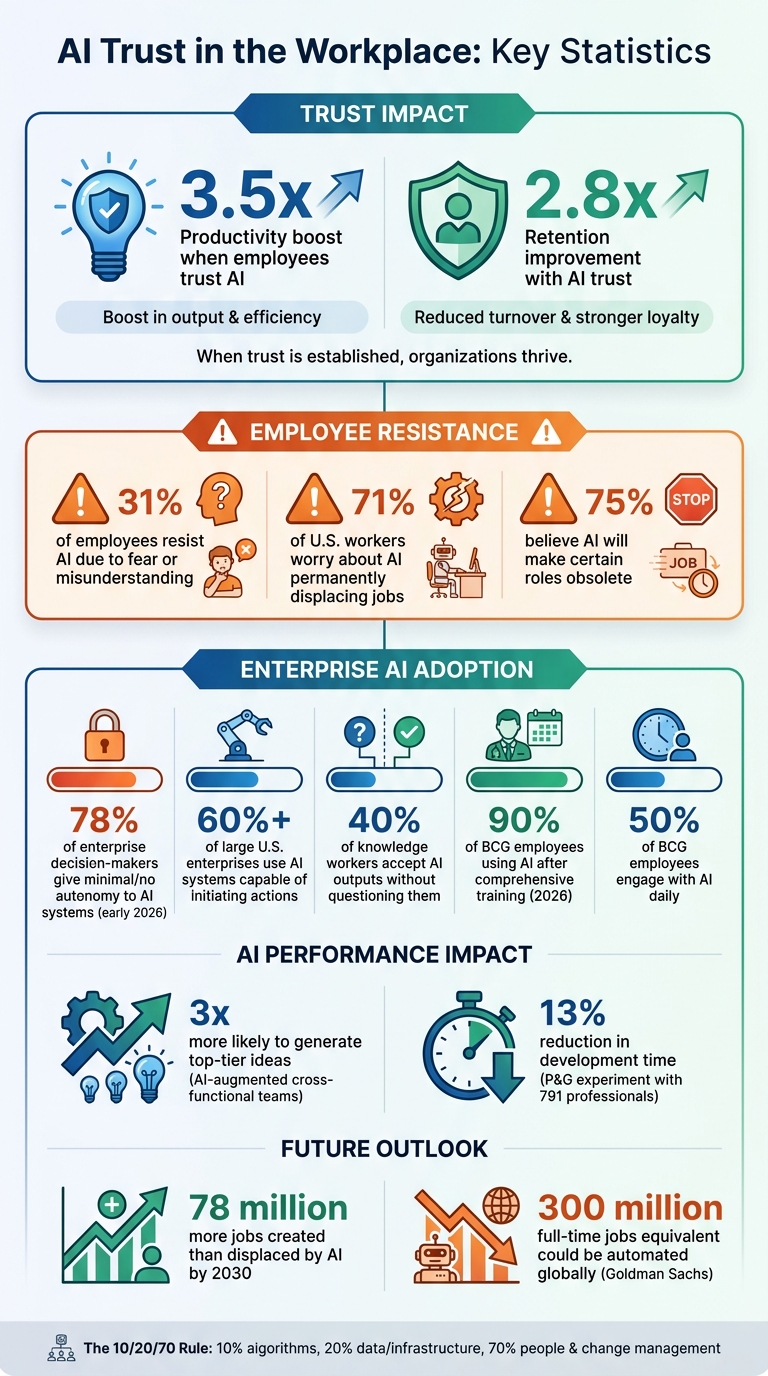

- Trust in AI boosts productivity by 3.5x and retention by 2.8x.

- 31% of employees resist AI due to fear or misunderstanding.

- Effective AI integration depends on transparency, accountability, and clear human-AI roles.

- Building trust involves psychological safety, shared ownership, and empathy.

To succeed, organizations must focus on people, ensuring AI complements human expertise rather than replacing it.

AI Trust Statistics: Impact on Productivity, Retention, and Employee Adoption



Addressing Employee Concerns

Tackling employee concerns is essential for narrowing the trust gap in cross-functional teams.

The Emotional Impact of AI Transitions

AI adoption often stirs significant anxiety among employees. A clear example of this occurred in April 2025, when Duolingo announced its shift to becoming "AI-first." The announcement triggered existential fears among employees, prompting CEO Luis von Ahn to clarify the company’s intentions and work to rebuild trust [3]. This reaction isn’t unique. Surveys show that 71% of U.S. workers worry about AI permanently displacing jobs, while 75% believe it will make certain roles obsolete [6]. These concerns stem from uncertainty, not irrational resistance. As Hart Brown, President of AI & Transformation at Saxum, explains:

"Your organization's culture isn't rejecting AI as a technology. It's signaling you aren't trusted to integrate it" [3].

The real issue isn’t the technology itself - it’s the lack of clear leadership intent. This fear, often referred to as FOBO (Fear of Becoming Obsolete), highlights the need for transparent and empathetic leadership.

Building Psychological Safety

Leaders play a crucial role in easing these anxieties by fostering an environment where mistakes are seen as opportunities to learn. Instead of presenting AI as a perfect solution, they should frame its adoption as an ongoing learning journey. Jayshree Seth and Amy C. Edmondson emphasize this point:

"Signal that early missteps are valuable learning moments, not failures. Make questioning AI a norm, not a red flag" [5].

Boston Consulting Group (BCG) applied this philosophy in 2026. Rather than forcing AI adoption, BCG’s global people chair positioned it as a commitment to employees and clients. The company invested in comprehensive training programs, leading to nearly 90% of employees using AI, with about half engaging with it daily [3]. By acknowledging potential missteps and encouraging feedback, leaders can demonstrate that human judgment remains essential in the AI era [5].

Transparent Communication About AI Transitions

Unclear communication only amplifies employee concerns. When leadership fails to provide clear information, employees often imagine worst-case scenarios about job security and role relevance [6]. As Madison-Davis points out:

"Saying 'I understand why this feels unsettling' is more powerful than 'there's nothing to worry about.' The first acknowledges reality while the second dismisses it" [6].

Effective communication requires radical transparency. Leaders need to explain what is changing, why it’s happening, and how it will affect roles and futures. This involves sharing detailed implementation timelines, outlining specific tasks AI will handle versus those that remain human-driven, and openly admitting what remains uncertain [6].

Additionally, linking AI literacy to career advancement can shift perspectives. By framing AI as a skill that increases marketability, employees may begin to see it as a tool to enhance their abilities rather than replace them. Research indicates that AI could create approximately 78 million more jobs than it displaces by 2030 [3]. However, this optimism needs to be backed by tangible actions such as retraining programs and transition assistance. When employees see clear pathways to adapt and grow, they’re more likely to embrace AI as an opportunity rather than a threat [6].

Transparent communication and proactive support not only ease fears but also establish a foundation for ethical AI integration within cross-functional teams.

Establishing Ethical Guidelines and Accountability

Once leaders have fostered trust and communicated the purpose of AI integration, the next step is setting clear ethical guidelines. Employees need to see not just why AI is being implemented but also how it will be governed. Without well-defined boundaries and accountability measures, even the best communication efforts can fall short.

Setting Guardrails for AI Usage

The foundation of AI governance lies in establishing key principles. Leaders should focus on five core pillars:

- Fairness: Reducing bias in AI outputs

- Transparency: Explaining how AI reaches its conclusions

- Accountability: Ensuring human ownership of final decisions

- Privacy: Protecting sensitive data

- Security: Addressing system vulnerabilities

These principles can be translated into actionable policies, such as implementing strict identity management, robust encryption, and data anonymization practices [8][9].

Michael Impink, an instructor at Harvard Division of Continuing Education, underscores the importance of accountability with a blunt metaphor:

"When something goes wrong, you need a throat to squeeze" [9].

One way to ensure accountability is by conducting Ethical Impact Assessments before deploying AI systems. These assessments help identify potential risks and ensure traceability, so every AI-driven action can be audited [7]. Transparency is equally critical - employees and customers should always know when they're interacting with AI and understand its limitations [10].

Balancing AI and Human Decision-Making

As AI transitions from being a tool to a decision-maker, confusion often arises over who is ultimately responsible for outcomes. By early 2026, 78% of enterprise decision-makers reported giving minimal or no autonomy to AI systems capable of making decisions, reflecting a significant trust gap [4]. However, more than 60% of large U.S. enterprises now use AI systems capable of initiating actions within predefined limits [12].

To address this, organizations can adopt one of three oversight models depending on the level of risk involved:

- Human-in-the-loop: Requires human approval for every AI decision, ideal for high-stakes scenarios like hiring or financial approvals.

- Human-on-the-loop: Allows AI to operate independently but with human monitoring and the ability to intervene, suitable for tasks like inventory management.

- Human-in-command: Provides strategic oversight without micromanaging individual decisions [10].
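The three oversight models can be written down as an explicit policy so that nobody has to guess which one applies. The following is a minimal sketch, not a prescribed implementation: the task names and the `TASK_POLICY` mapping are illustrative assumptions (hiring, financial approvals, and inventory management come from the examples above; the human-in-command entry is hypothetical), and unlisted tasks default to the strictest model.

```python
from enum import Enum


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "a human approves every AI decision"
    HUMAN_ON_THE_LOOP = "AI acts independently; a human monitors and can intervene"
    HUMAN_IN_COMMAND = "humans set strategy without reviewing each decision"


# Illustrative policy table (assumption): each organization defines its own.
TASK_POLICY = {
    "hiring": Oversight.HUMAN_IN_THE_LOOP,
    "financial_approval": Oversight.HUMAN_IN_THE_LOOP,
    "inventory_management": Oversight.HUMAN_ON_THE_LOOP,
    # Hypothetical example; the article names no task for this model.
    "portfolio_strategy": Oversight.HUMAN_IN_COMMAND,
}


def oversight_for(task: str) -> Oversight:
    """Return the oversight model for a task, defaulting to the strictest one."""
    return TASK_POLICY.get(task, Oversight.HUMAN_IN_THE_LOOP)


def requires_approval(task: str) -> bool:
    """True when a human must sign off before the AI decision takes effect."""
    return oversight_for(task) is Oversight.HUMAN_IN_THE_LOOP
```

Defaulting unknown tasks to human-in-the-loop is a design choice in this sketch: a new use case gets full human review until someone deliberately assigns it a lighter model.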

Organizations must also clarify who owns the data, insights, and final outcomes. Tracking override rates - how often humans reject or modify AI recommendations - can serve as a key indicator of trust and model performance [4]. Without clear accountability, responsibility risks becoming fragmented across systems, managers, and employees.

Reducing Risk Through Clear Handoffs

Once decision-making responsibilities are defined, the next step is to minimize risk by clearly documenting the handoffs between AI outputs and human judgment. Ambiguity in these transitions erodes trust more quickly than any technical failure. When team members are unsure where AI's role ends and their own begins, they may either blindly follow flawed recommendations or dismiss valuable insights entirely. Studies show that 40% of knowledge workers accept AI outputs without questioning them, emphasizing the need for clear decision boundaries [13].

To address this, leaders should document which tasks AI can handle independently - such as low-risk, rules-based activities like scheduling - and which require human intervention, particularly high-stakes decisions involving empathy or strategic thinking [12]. Implementing override protocols can further improve accountability. For example, team members could be required to log their reasoning when overriding AI recommendations. This creates a feedback loop that highlights areas where the AI model underperforms or where human intuition adds value [4].
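An override protocol like the one described above can be as simple as a log that refuses an override without a stated reason and reports the override rate. This is a hedged sketch under assumed names (`Review`, `OverrideLog`, and the sample recommendations are all hypothetical), not a reference to any particular tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Review:
    recommendation: str            # what the AI suggested
    accepted: bool                 # False means the human overrode it
    reason: Optional[str] = None   # required whenever accepted is False


class OverrideLog:
    """Records human reviews of AI recommendations (illustrative only)."""

    def __init__(self) -> None:
        self.reviews: List[Review] = []

    def record(self, recommendation: str, accepted: bool,
               reason: Optional[str] = None) -> Review:
        # Enforce the protocol: an override must carry the reviewer's reasoning.
        if not accepted and not (reason and reason.strip()):
            raise ValueError("An override must include the reviewer's reasoning.")
        review = Review(recommendation, accepted, reason)
        self.reviews.append(review)
        return review

    def override_rate(self) -> float:
        """Share of reviewed recommendations that humans rejected or modified."""
        if not self.reviews:
            return 0.0
        overrides = sum(1 for r in self.reviews if not r.accepted)
        return overrides / len(self.reviews)


log = OverrideLog()
log.record("Schedule follow-up call for Tuesday", accepted=True)
log.record("Approve standard refund", accepted=True)
log.record("Send renewal reminder", accepted=True)
log.record("Decline escalation request", accepted=False,
           reason="Customer has an open complaint the model could not see.")
print(f"Override rate: {log.override_rate():.0%}")  # prints "Override rate: 25%"
```

The logged reasons are what make the feedback loop useful: reviewing them periodically shows whether overrides cluster around missing context, poor model performance, or reviewer habit.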

Philip Cooper, VP of Product at Salesforce, highlights the importance of framing AI correctly:

"Build trust with business users by showing them AI gives them insight, not mandates" [11].

Providing context for AI outputs - such as the data points and predictive factors behind a recommendation - empowers humans to make informed decisions rather than simply following instructions. As HFS Research notes:

"The effectiveness of Stage 4 maturity is not that AI is always right. It is that the organization has accepted that AI creates value only when it is allowed to be wrong before it is right" [4].

Using Empathy to Enable Cross-Functional Collaboration

Ethical guidelines and clear handoffs set the stage for AI integration, but structure alone won't create alignment; empathy is what brings teams together. Cross-functional teams often consist of individuals with varied expertise, concerns, and ways of thinking. For example, a sales manager might be focused on how machine learning impacts pipeline logic, while an email marketing manager is more concerned with whether AI can boost engagement rates [2]. Empathy bridges these different perspectives.

Understanding Different Team Perspectives

When a data analyst sees AI as a potential threat to their expertise, or a customer service representative worries about being replaced by algorithms, they might experience what's known as a "judgment gap." This is the uneasy feeling that AI is overshadowing years of experience with overly confident outputs [15]. Acknowledging this gap is crucial for building the trust that cross-functional teams need to succeed.

Leaders who take the time to address these fears create an environment where open conversations can happen. Matt Whetton, CTO at Acquired.com, explains:

"AI won't break trust by default. But it will expose where trust was already fragile" [15].

Rather than dismissing concerns as resistance to change, skilled leaders see them as opportunities to identify areas needing more clarity. This approach requires consistent effort - dedicating time for teams to discuss uncertainties and adapt to evolving roles [5].

Take Klarna as an example. The company initially reduced its workforce, believing AI could handle customer support more efficiently. However, it later reversed course, reassigning staff to customer service roles. CEO Sebastian Siemiatkowski admitted that "investing in the quality of human support is the way of the future" [14].

The takeaway? Empathy isn't just a nice-to-have; it's a smart move.

Creating Safe Spaces for Feedback

Psychological safety doesn't happen overnight or through a single town hall meeting. It’s built over time, with leaders consistently demonstrating that mistakes are opportunities to learn. Experts like Jayshree Seth and Amy C. Edmondson suggest that leaders can foster this environment by admitting when AI outputs confuse them and encouraging their teams to share challenges. This helps normalize the process of learning from errors [5].

One actionable strategy is to make AI usage visible. Encourage team members to disclose when they’ve used AI in their work - whether in pull requests, project updates, or team meetings - just as they would acknowledge a colleague’s contribution. This kind of transparency builds trust [15]. Another approach is introducing "smart failure protocols", which distinguish between thoughtful experiments (like testing AI in low-risk scenarios) and careless mistakes. Sharing lessons from these experiments openly helps the entire team grow [5].

Clear communication about AI’s purpose also eases anxiety. Make it explicit that AI is there to reduce friction, not to monitor productivity or evaluate individual performance. Ambiguity about how AI data is used can lead to disengagement. When employees know that humans remain responsible for final decisions - even with AI involved - they feel more confident in their roles [15].

Learning and Improving Through Iteration

Transparent communication and ethical oversight lay the groundwork, but shared learning rituals speed up AI integration. The most effective implementations don’t come from top-down directives. Instead, they emerge from team-driven experiments where members test, reflect, and refine together. Starting with small pilot projects - like using AI to summarize emails or draft client letters - helps teams build confidence gradually [2].

After-action reviews are especially helpful. Regularly discussing where AI succeeded or fell short, and framing these sessions as opportunities for collective reflection rather than performance critiques, fosters continuous improvement [5].

Research underscores the value of this approach. AI-augmented cross-functional teams are three times more likely to generate top-tier ideas compared to individuals working without AI [14]. As Harvard Business School researcher Fabrizio Dell'Acqua notes:

"If you want to empower an individual to be as effective as a team, give them AI. But if you want to be in that top 10% of performers, a full human team plus AI seems like the recipe for success" [14].

The goal isn’t to replace human judgment - it’s to enhance it through collaboration. By prioritizing empathy, teams can integrate AI in ways that work in practice and help the whole team thrive.

Co-Creating AI Integration for Long-Term Alignment

When it comes to successfully integrating AI into an organization, involving employees in the process from the start is critical. This approach not only strengthens trust but also ensures AI enhances human expertise rather than replacing it. By engaging employees in selecting and refining AI tools, companies can foster a sense of ownership that top-down decisions often fail to achieve. After all, no one understands the daily challenges and workflows better than the people who live them.

Involving Teams in AI Tool Selection and Testing

Successful AI implementation isn’t just about technology - it’s about people. The 10/20/70 rule emphasizes this: 10% of the effort is dedicated to algorithms, 20% to data and infrastructure, and a whopping 70% to people, processes, and change management [16]. This is where real value is created. As Amie Harpe, Founder and Principal Consultant at Sakara Digital, points out:

"The algorithm and model work is real and important - but it is rarely the binding constraint on whether the program produces business value" [16].
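To make the 10/20/70 rule concrete, the split can be applied to any effort or budget figure. The sketch below is only a worked example: the function name `allocate_effort` and the 1,000-hour total are assumptions for illustration, not figures from the cited source.

```python
# Percentages from the 10/20/70 rule described above.
SPLIT_PERCENT = {
    "algorithms": 10,
    "data_and_infrastructure": 20,
    "people_process_change_management": 70,
}


def allocate_effort(total: float) -> dict:
    """Divide a total (hours, dollars, headcount) according to the 10/20/70 rule."""
    return {area: total * pct / 100 for area, pct in SPLIT_PERCENT.items()}


# Hypothetical program with 1,000 hours of planned effort.
plan = allocate_effort(1000)
for area, hours in plan.items():
    print(f"{area}: {hours:.0f} hours")
```

For the hypothetical 1,000-hour program, this yields 100 hours for algorithms, 200 for data and infrastructure, and 700 for people, processes, and change management.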

One way to ensure success is by forming cross-functional pilot groups. These teams, drawn from departments like sales, IT, compliance, and customer service, represent everyone who will interact with the AI. For example, Procter & Gamble conducted a field experiment between May and July 2024 with 791 professionals across its baby care, grooming, and oral care units. Using an internal GPT-4 tool, these teams were three times more likely to generate top-tier product ideas and reduced development time by 13% [20].

To ease into AI adoption, pilot groups should start with low-stakes scenarios. By using tools like interviews and journey mapping, teams can pinpoint real pain points and workflows. For instance, automating tasks like summarizing emails or drafting client letters can help employees see AI as a supportive partner rather than a threat [18]. Regular calibration sessions can also help normalize errors and turn them into learning opportunities [2].

Another effective strategy is implementing "second opinion" workflows. In these setups, humans review AI-generated recommendations and decide whether to accept or override them, creating a feedback loop that improves both human trust and AI performance over time [2].

Empowering Teams Through Shared Ownership

AI integration isn’t a one-and-done process - it requires ongoing collaboration. Companies that adapt their strategies based on employee feedback are four times more likely to succeed in their transformation efforts [17].

One practical way to encourage this is by appointing AI champions. These are employees who are enthusiastic about AI and capable of mentoring their peers. Peer-led coaching often builds more trust than directives from leadership [19][17]. Chris Tillett, Chief Technology Officer at McChrystal Group, emphasizes:

"Adoption is critical because it also trains the AI models in the types of data sources that need to be adjusted and improves the quality of outputs" [18].

Leaders can further shift mindsets by positioning AI as a "teammate" rather than just a tool. This involves creating feedback loops where AI outputs are refined based on human judgment and priorities [17]. Updating job descriptions to include AI collaboration - like "manages AI-assisted analysis" - can also help solidify this perspective.

Transparency is another key factor. Sharing performance data that highlights where AI falls short helps build trust by showing employees that humans remain accountable for final decisions [16].

| Strategy | Focus Area | Outcome |
|---|---|---|
| Shared Consciousness | Alignment | Ensures all levels understand AI's role [18] |
| Tiger Teams | Problem Solving | Multidisciplinary groups resolve bottlenecks [1] |
| AI Champions | Culture | Peer-led coaching drives organic adoption [19][17] |
| Teammate Model | Workflow | AI becomes an iterative collaborator [17] |

By empowering teams, organizations not only ensure smoother AI adoption but also enhance the human skills that set them apart.

Building the Human Moat

Co-creating AI solutions does more than speed up adoption - it strengthens what Seth Mattison calls the "Human Moat." This concept refers to the human qualities - like empathy, context, and nuance - that differentiate organizations in an increasingly automated world. While AI excels at handling routine tasks and data patterns, humans bring the depth and understanding that build trust and resilience [2].

Some companies initially leaned too heavily on AI for customer service, only to realize the importance of human involvement. Reassigning workers to customer-facing roles highlighted that while AI can double productivity for routine tasks, achieving top-tier performance requires AI-augmented teams [14]. As Harvard Business School researcher Fabrizio Dell'Acqua explains:

"If you want to be in that top 10% of performers, a full human team plus AI seems like the recipe for success" [20].

To build this Human Moat, organizations must design processes where AI enhances human strengths rather than replacing them. This often involves "intelligent disobedience", or the ability to challenge AI outputs with human judgment [2]. Andrew Royal, Founder & Principal at Fullstack RevOps, sums it up well:

"The organizations that will dominate the next decade are not building AI to replace their people. They are building AI to make their people irreplaceable" [2].

Conclusion

Role transitions often falter not because of the technology but because trust begins to crumble. As Matt Whetton, CTO at Acquired.com, explains:

"AI won't break trust by default. But it will expose where trust was already fragile" [15].

Forward-thinking leaders understand that successfully integrating AI isn't solely about algorithms or systems - it's about prioritizing people, ensuring transparency, and maintaining accountability.

In this new age of AI-driven automation, where routine tasks are increasingly handled by machines, professional value lies in qualities like judgment, contextual understanding, and ethical decision-making - not just execution. For instance, Goldman Sachs predicts that AI could automate tasks equivalent to 300 million full-time jobs globally [21]. Yet, the organizations that will excel aren't those that simply replace people, but those that make their teams indispensable by fostering empathy, nuanced decision-making, and a focus on solving meaningful problems. This concept, often referred to as the Human Moat, underscores the irreplaceable skills that set high-performing teams apart.

To build this strength, organizations must clearly define what AI will and won’t do, while ensuring that ultimate accountability remains with humans. When employees feel safe disclosing their use of AI, trust grows. Encouraging individuals to value their own judgment and actively participate in shaping the tools they use further reinforces this trust.

On the other hand, trust erodes when responsibilities become unclear [15]. The best way to safeguard it is by keeping judgment, craftsmanship, and accountability firmly in human hands, using AI as a tool that enhances human work rather than overshadowing it.

As Seth Mattison's insights emphasize, anchoring decisions in distinctly human perspectives is the cornerstone of maintaining trust and achieving a lasting competitive edge in the AI era.

FAQs

How do we define what AI owns vs what humans own?

Defining the roles of AI and humans starts with recognizing their unique strengths. AI shines in automating repetitive tasks, processing vast amounts of data, and identifying patterns or insights at incredible speed. On the other hand, humans contribute judgment, ethical reasoning, and a nuanced understanding of context - things machines can't replicate.

When it comes to trusting AI, transparency and clear boundaries are key. AI can manage routine operations efficiently, but humans must remain at the helm for decisions that require moral and ethical considerations. It's up to leaders to ensure AI serves as a tool to enhance human capabilities, not as a substitute for the critical thinking and oversight only people can provide.

What’s the fastest way to reduce FOBO and resistance?

The quickest way to ease FOBO (Fear of Becoming Obsolete) and resistance is by establishing trust through transparency. Involve teams in the process of adopting AI, clearly explain its purpose and potential impact, and ensure continuous training is available. These actions create a sense of alignment and help teams feel more assured and involved during AI-related changes.

How can we measure whether teams actually trust the AI?

Teams can gauge their trust in AI by looking at a few key signals: how much autonomy they retain in decision-making, how strongly AI shapes their actions, how often they override or modify AI recommendations, and how confident they are in the results AI delivers. The HFS AI Trust Curve offers a structured way to chart this trust journey. It starts with building initial confidence in AI models and progresses toward fully integrating and depending on AI insights for making decisions.