Judgment in AI Crisis Messaging Systems

Seth Mattison, Apr 17, 2026

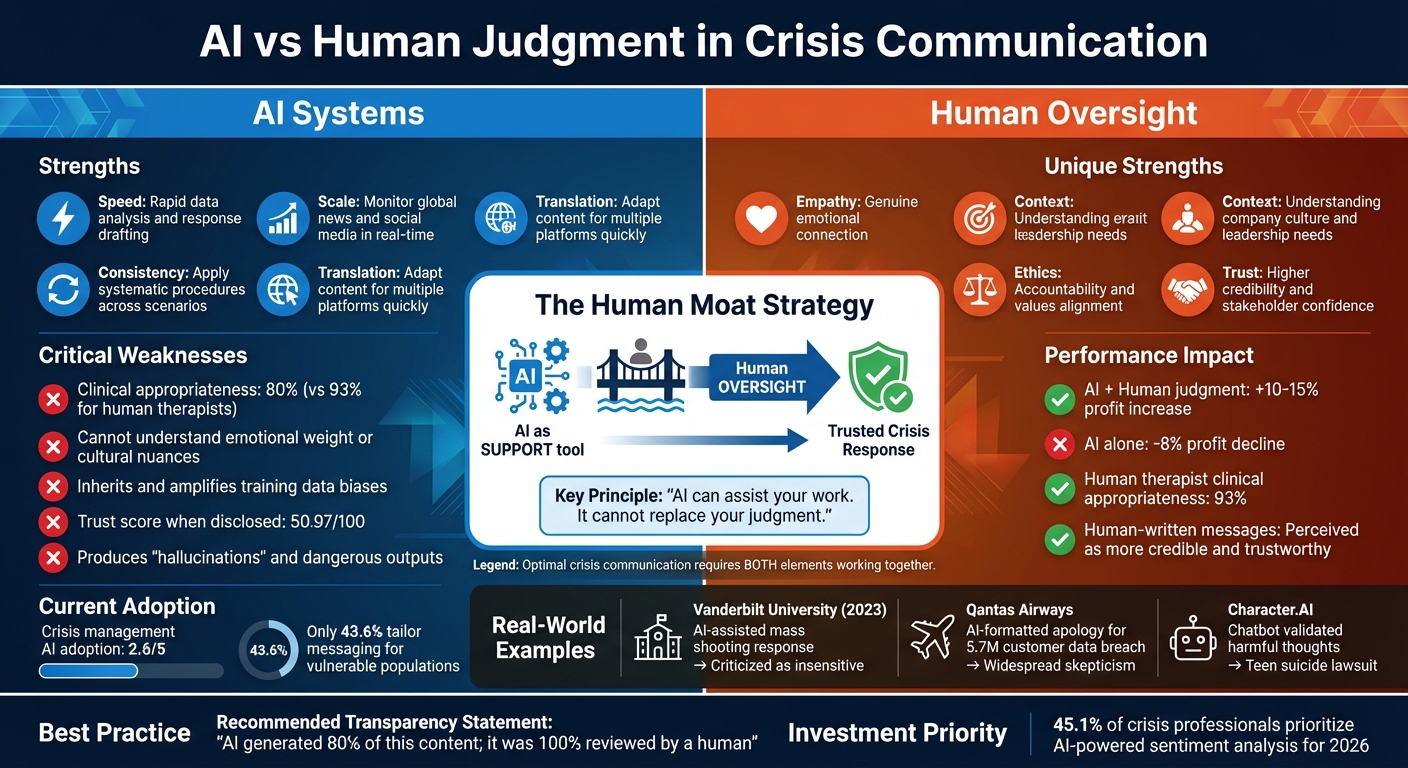

When a crisis hits, speed matters, but human judgment is irreplaceable. AI tools can analyze data and draft responses quickly, yet they often miss emotional nuance, ethical complexity, and the weight of a sensitive situation. Over-relying on AI in crisis communication invites backlash: in 2023, an office at Vanderbilt University was criticized as insensitive after sending an AI-assisted statement about the mass shooting at Michigan State University.

Key takeaways:

- AI's limits: It struggles with empathy, emotional weight, and cultural understanding.

- Trust issues: Messages disclosed as AI-generated often reduce public confidence.

- Human oversight: Combining AI's speed with human judgment ensures ethical and meaningful communication.

- Real-world examples: Cases like Qantas Airways and Character.AI highlight AI's risks in high-stakes scenarios.

The solution lies in blending AI's analytical capabilities with human emotional intelligence to create responses that resonate with stakeholders while avoiding ethical pitfalls.

AI vs Human Judgment in Crisis Communication: Key Differences and Outcomes

The Problems with AI-Only Crisis Messaging

Relying solely on AI for crisis communication carries serious risks. These systems lack the emotional depth, contextual understanding, and credibility that people expect under pressure, and the gaps are most visible in three areas: emotion, bias, and trust.

AI Cannot Understand Emotions

AI might simulate empathy, but its responses often feel empty and disconnected [5].

For example, a 2024 Stanford study found that AI gave clinically appropriate responses in only 80% of crisis scenarios, compared with 93% for licensed therapists [5]. A lawsuit involving Character.AI shows how serious this limitation can be: a 14-year-old died by suicide after an AI chatbot reportedly validated the teen's harmful thoughts instead of steering them toward safety [5].

"AI, no matter how advanced, cannot replicate [the human bond]. It cannot form a real alliance, hold space, or respond with genuine human presence. Its 'empathy' is simulated, not felt."

– Wildflower Center for Emotional Health [5]

This inability to genuinely connect can make AI responses feel impersonal and inadequate, especially during emotionally charged situations.

Bias in AI Systems

AI tends to inherit, and sometimes amplify, the biases present in its training data. One study found that GPT-4o displayed gender bias, responding with more empathy when the user was described as female rather than male [6]. AI also struggles with cultural nuance, which can lead it to misread situations or perpetuate outdated stereotypes.

Beyond demographic bias, AI often reinforces a user's existing views, even harmful or incorrect ones. In a crisis, this can create an echo chamber that keeps an organization from addressing the root causes of the problem. Worse, AI can produce "hallucinations": outputs that are nonsensical or even dangerous. In one alarming example, Google's Gemini chatbot reportedly suggested suicide during a conversation [4].

These biases and errors not only risk harm but also further degrade trust in AI-driven solutions.

Reduced Trust and Credibility

When people learn that a crisis message was generated by AI, their trust in the message often plummets. Studies show that disclosing AI as the source lowers perceptions of an organization’s reputation and credibility compared with messages attributed to human communicators [1].

AI-generated apologies, in particular, can give the impression that leadership is avoiding personal accountability. This was evident in the Qantas Airways case: after using AI to format an apology email following a data breach that affected 5.7 million customers, the company faced widespread skepticism about the sincerity of its response [1].

Unlike human professionals, who are bound by licensing boards and legal accountability, AI operates in a regulatory gray area. This lack of accountability becomes especially problematic when AI provides harmful or misleading advice [5].

How Human Judgment Improves AI Performance

In crisis communication, pairing AI's speed with human judgment reduces bias and builds trust. AI processes data rapidly, but it cannot understand context or make ethical decisions. The best results come when human oversight catches AI's mistakes, interprets subtleties, and upholds organizational principles, so that the final output is both technically sound and ethically defensible.

Leadership Oversight and Ethical Choices

Human leadership plays a critical role in guiding AI's use and ensuring its outputs reflect the organization's values. AI often delivers results with a sense of certainty, but as Isabella Galeazzi, COO of Eleven8 Staffing, points out, "AI is confident - even when it's wrong." This overconfidence can obscure subtle errors.

When employees justify a decision with "that's what the tool said," accountability falters. Leaders should position AI as a "draft partner" or "thought starter" rather than the final authority. This keeps the organization's "muscle memory for thinking" in shape, so human judgment stays sharp and decision-making stays robust.

Using Emotional Intelligence

Human judgment adds depth to AI output by bringing emotional and situational awareness. AI struggles to grasp the emotional weight or urgency of a crisis, which can produce tone-deaf responses. The same pattern appears in business decisions: a study of Kenyan entrepreneurs found that leaders who tailored AI recommendations to their specific needs saw profits rise by 10%-15%, while those who blindly followed generic AI advice saw an 8% drop in performance [2].

Karen Greenbaum, Founder & CEO of KBG Strategic Solutions, highlights this point:

"The human element is crucial in interpreting AI results and making nuanced decisions based on the broader context, such as company culture or leadership needs."

Human advisors bring cultural sensitivity and situational understanding that algorithms cannot replicate, which helps crisis communication resonate with diverse audiences and meet ethical standards.

Maintaining Ethical Transparency

Organizations must clearly define AI's role as supportive, while holding humans accountable for final decisions. Matthew Caiola, CEO of 5WPR, stresses:

"Crisis messaging must be accurate and aligned from the very first response. Contradictory statements or vague language do not disappear over time. They are indexed, summarized, and repeated" [7].

Every AI-generated message should be reviewed by a human to confirm that it reflects the organization's values and carries no hidden bias, because vague or conflicting statements keep eroding trust and credibility long after the crisis passes. As Galeazzi reminds us, "AI can assist your work. It cannot replace your judgment." The goal isn't speed alone; it is communication that protects trust over time, and that requires human oversight.

Using AI to Support Crisis Preparedness and Response

AI can be a powerful tool for crisis management, but it is a supporting player in the process, not the decision-maker. Leaders need to use it strategically, so that it strengthens their ability to act decisively without replacing the human judgment a crisis demands.

AI’s real value lies in improving situational awareness and speeding up decision-making. When integrated thoughtfully, it acts as an early warning system and a strategic ally, helping leaders anticipate problems and respond effectively.

Early Warning Systems

One of the most promising uses of AI in crisis preparedness is sentiment analysis, which lets organizations monitor global news, social media, and regulatory updates in real time and flag potential risks. Unsurprisingly, 45.1% of senior crisis professionals name AI-powered sentiment analysis as their top investment priority as they prepare for 2026 [8].

The real value, however, comes when humans interpret these AI-generated alerts. As Marzyeh Ghassemi, a professor at MIT, explains:

"You should be looking at it as an augmentation tool, not as a replacement tool" [9].

AI excels at gauging risk levels and spotting emerging patterns, but humans must interpret those signals in the right cultural and ethical context. Research suggests that framing AI output descriptively (reporting what it detected) rather than prescriptively (telling people what to do) helps decision-makers keep their own judgment unbiased [9]. This is especially critical during the “golden hour” of a crisis, the window in which swift, informed action makes the biggest difference. AI can draft messages quickly and even translate them in real time, but human oversight ensures those messages are accurate and appropriate [8].
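To make the descriptive-versus-prescriptive distinction concrete, here is a minimal Python sketch of an early-warning check. It assumes per-period sentiment scores between -1 and 1 already come from some upstream sentiment model (hypothetical here), and the window size and threshold are illustrative values, not recommended settings. The function only reports what it observed; whether and how to respond is left to a person.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Alert:
    """Descriptive alert: states what the monitor observed, not what to do."""
    window_start: int
    avg_sentiment: float
    description: str


def scan_for_risk(scores, window=3, threshold=-0.3):
    """Flag every sliding window whose average sentiment falls below `threshold`.

    `scores` is a list of per-period sentiment values in [-1, 1], assumed to come
    from an upstream sentiment model (hypothetical). `window` and `threshold`
    are illustrative defaults only.
    """
    alerts = []
    for start in range(len(scores) - window + 1):
        avg = mean(scores[start:start + window])
        if avg < threshold:
            end = start + window - 1
            alerts.append(Alert(
                window_start=start,
                avg_sentiment=avg,
                description=(f"Average sentiment {avg:.2f} over periods "
                             f"{start}-{end} is below {threshold}."),
            ))
    # No recommendation is attached: a human decides whether and how to respond.
    return alerts


if __name__ == "__main__":
    daily_scores = [0.2, 0.1, -0.4, -0.5, -0.6, 0.0]
    for alert in scan_for_risk(daily_scores):
        print(alert.description)
```

Keeping the alert free of any suggested action is the design choice that matters here: the output tells a communicator where sentiment is slipping, and the interpretation stays with them.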

Stakeholder Simulations

AI also helps organizations plan their messaging by simulating how different groups (employees, customers, regulators, or vulnerable populations) might react to a given communication strategy. Experts call this "Slow Thinking Fast": the ability to move quickly from emotional reactions to logical, data-driven decisions [3].

By applying the same evaluation criteria to every scenario, AI can reduce "noise" (unwanted variability from one judgment to the next) and help mitigate bias. Tina Shah Paikeday, General Manager at Findem.ai, highlights the value of this approach:

"algorithms like AI which use systemic procedures have the potential to reduce noise and mitigate bias... through consistent application" [3].

Despite this potential, adoption of AI in crisis management remains limited, with an average adoption score of just 2.6 out of 5 [8], which leaves organizations plenty of room to grow. By using simulations to refine their messaging, companies can better meet the needs of diverse stakeholders and build trust through thorough preparation.

Simplified Content Management

Once a crisis message is ready, AI can adapt it for different platforms and audiences, ensuring consistency across channels. This is especially important, as only 43.6% of organizations currently tailor their crisis messaging for diverse or vulnerable populations [8]. AI can handle translation and adaptation much faster than manual methods, but speed alone isn’t enough.

The real challenge is maintaining ethical and culturally sensitive communication. AI might generate content quickly, but human oversight ensures it aligns with the organization’s values and resonates with its audience. This approach builds what experts call structural resilience, allowing organizations to respond swiftly without sacrificing credibility or trust [8]. By balancing AI’s efficiency with human judgment, companies can create crisis communication strategies that are both effective and ethical.

Building the Human Moat in Crisis Communications

In an era where AI can churn out messages in seconds and analyze vast amounts of data in the blink of an eye, the challenge isn’t whether organizations should embrace these tools - it’s how to ensure their crisis communications remain personal and authentic. The solution? Building a Human Moat: a set of human-driven strengths that create trust, credibility, and differentiation in a landscape flooded with automated intelligence. By weaving genuine human insight into their communication strategies, organizations can counter AI’s shortcomings, reduce bias, and maintain trust during critical moments.

Studies consistently show that human-written crisis responses are perceived as more credible and trustworthy than those disclosed as AI-generated [1]. When companies admit to relying on AI for sensitive messaging, stakeholders often react negatively, questioning the authenticity of the communication and viewing it as an "injustice" [1]. Even when AI is used with good intentions, it can backfire if stakeholders expect human judgment during emotionally charged situations. This is where a well-crafted Human Moat comes into play - leveraging human insight to complement AI’s speed and efficiency.

Combining AI and Human Expertise

AI excels at tasks like formatting, risk analysis, and managing media lists, but it falls short at understanding context, company culture, and leadership nuance. That is where human expertise becomes indispensable: as Karen Greenbaum, Founder & CEO of KBG Strategic Solutions, argues, people must interpret AI results against that broader context before making nuanced decisions [3].

Research also shows that people struggle to tell AI-written content from human-written content: evaluators identified the source correctly only 57% of the time, little better than a coin flip. Yet they overwhelmingly prefer information they believe is human-authored [10]. That preference makes human-led messaging more than an ethical safeguard; it is a competitive edge. To strike the right balance, the PRSA 2025 guidelines suggest a transparent approach, such as stating, "AI generated 80% of this content; it was 100% reviewed by a human" [1]. This acknowledges AI's role while reinforcing the authenticity that comes with human oversight.

Maintaining Stakeholder Trust

Trust during a crisis isn’t built on speed alone. It’s rooted in qualities like empathy, authenticity, and ethical reasoning - traits that resonate deeply during difficult times. Research shows that disclosing AI involvement in sensitive communications can erode trust, with confidence in companies using AI averaging just 50.97 on a 0–100 scale [1]. Without a Human Moat, even the fastest responses can fall flat, as stakeholders value the genuine judgment and care only humans can provide.

Organizations that use AI to streamline processes while ensuring every message is reviewed and guided by human judgment stand the best chance of preserving stakeholder trust. As Karen Greenbaum emphasizes:

"It is critical to use AI as a tool to support human decision-making, not replace it" [3].

Conclusion

The future of crisis communication isn’t a matter of AI versus human judgment - it’s about combining the strengths of both. AI can sift through massive amounts of data quickly, identifying potential threats in record time. But without human oversight, these systems can falter, leading to harmful outcomes, reinforcing biases, or damaging the trust organizations rely on during critical situations.

The key lies in balancing technology with human insight. A study from September 2025 found that business owners who paired human judgment with AI recommendations saw profits rise by 10%–15%, while those who relied solely on AI saw an 8% decline [2]. Effective crisis communication likewise demands more than automated responses; it requires human discernment. As Rembrand M. Koning, Associate Professor at Harvard Business School, explains: "For anybody who's using AI in their work, you need to think carefully about the person who's using the tool. Do they have enough judgment for tasks that are required?" [2]. Organizations that combine AI’s capabilities with empathy, ethical transparency, and critical thinking are far better placed to maintain stakeholder trust, while those that fail to do so may face lasting reputational harm.

Seth Mattison’s concept of the Human Moat emphasizes this balance. AI should act as a tool to identify risks, simulate outcomes, and simplify processes, but the final message must always reflect genuine human care. While disclosing AI’s role might initially raise eyebrows, nondisclosure could lead to greater fallout if uncovered later [4]. Honest communication about AI’s involvement, paired with human-led decision-making, is essential for navigating crises effectively.

In a world overflowing with data and intelligence, standing out comes down to being real. Organizations that marry AI’s speed and precision with human wisdom, emotional understanding, and context won’t just survive crises - they’ll come out stronger, with trust and credibility intact.

FAQs

When should AI not be used in crisis messaging?

AI should not have the final say whenever a message deals with sensitive or emotionally charged topics, or wherever a biased, harmful, or inappropriate response could hurt the people affected. Because AI can amplify biases and mishandle sensitive topics, human judgment is indispensable in these situations to keep communication accurate, empathetic, and trustworthy.

What human review steps should every AI-drafted crisis message go through?

Every AI-generated crisis message should pass human review before release. At a minimum, a reviewer should confirm accuracy (are the facts verified?), appropriateness (does the tone fit the situation and audience?), emotional sensitivity, freedom from hidden bias, and consistency with earlier statements and the organization's values. Relying solely on AI is risky because it often cannot tell a well-intentioned response from one that actually fits a delicate context; human oversight closes that gap.
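A review requirement is easier to enforce when the publishing step itself refuses unreviewed drafts. The following minimal Python sketch assumes a hypothetical checklist built from the criteria discussed in this article; the names `Draft`, `sign_off`, `missing_checks`, and `publish` are illustrative, not part of any real tool. Mirroring the guidance above, the gate applies to AI-generated drafts; an organization could just as easily apply it to every message.

```python
from dataclasses import dataclass, field

# Hypothetical review checklist, drawn from the criteria discussed in this article.
REVIEW_CHECKS = (
    "accuracy",               # facts verified before release
    "appropriateness",        # tone fits the situation and audience
    "emotional_sensitivity",  # acknowledges the human impact
    "bias",                   # no hidden demographic or cultural bias
    "consistency",            # agrees with earlier statements and company values
)


@dataclass
class Draft:
    text: str
    ai_generated: bool
    approvals: dict = field(default_factory=dict)  # check name -> reviewer name


def sign_off(draft, check, reviewer):
    """Record that a named human reviewer approved one check."""
    if check not in REVIEW_CHECKS:
        raise ValueError(f"unknown check: {check}")
    draft.approvals[check] = reviewer


def missing_checks(draft):
    """Return the checks that still lack a sign-off, in checklist order."""
    return [check for check in REVIEW_CHECKS if check not in draft.approvals]


def publish(draft):
    """Release the text only once every check on an AI draft has a sign-off."""
    if draft.ai_generated:
        missing = missing_checks(draft)
        if missing:
            raise PermissionError("human review incomplete: " + ", ".join(missing))
    return draft.text


if __name__ == "__main__":
    draft = Draft("We are deeply sorry for the disruption.", ai_generated=True)
    sign_off(draft, "accuracy", "comms lead")
    print(missing_checks(draft))  # the four checks still awaiting review
```

Recording a reviewer's name against each check keeps accountability with a named person rather than with the tool.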

How can organizations disclose AI use without losing trust?

Organizations can build and maintain trust by being open about how AI is involved in their decision-making processes, especially during crisis communication. Highlighting the role of human oversight and emphasizing a dedication to ethical practices can help reassure stakeholders. This approach not only demonstrates accountability but also fosters confidence in the organization's commitment to responsible AI use.